As an organizer of the DevSlop Game Day, I couldn’t participate in the CTF itself (bummer!), so I chose to walk through the challenges before the event to make sure they were solvable and easy to understand. I had no experience with Kubernetes before organizing this CTF, so it was a perfect chance to learn by doing.

The CTF is designed with beginners in mind. The aim is to give learners enough information to work out the solution on their own. What I love about this kind of CTF is that it gives learners the bigger picture. You are not simply deploying a pod; you are tasked with building a realistic application made up of several interconnected components. A learner who completes all the tasks leaves the CTF with an overall idea of how microservices are built to interact with other components as part of a whole.

The walkthrough below was written a couple of days before the CTF launched, as part of my attempt to solve the challenges myself. Anyway, enough ranting. Let’s dive right in.

Table of Contents

- Getting Started

- Building Docker Images

- Pushing Docker Images to Amazon ECR Repositories

- Configuring Environment Variables with ConfigMaps and Secrets

- Deploying Services

- Deploying Microservices to Kubernetes

- Deploying Redis

- Configuring Ingress For The Front-End

- Securing the Cluster with Network Policies

Getting Started

The DevSlop Game Day Announcement advised us to install the following tools prior to Game Day:

- The AWS CLI version 2

- The Kubernetes command-line tool

- Docker

- An IDE or text editor of your choice, e.g. Visual Studio Code, Sublime Text, Atom, etc.

The instructions provided explain how to set up these tools on each OS. I already had them all preinstalled on my Ubuntu desktop.

Introduction (1 Point)

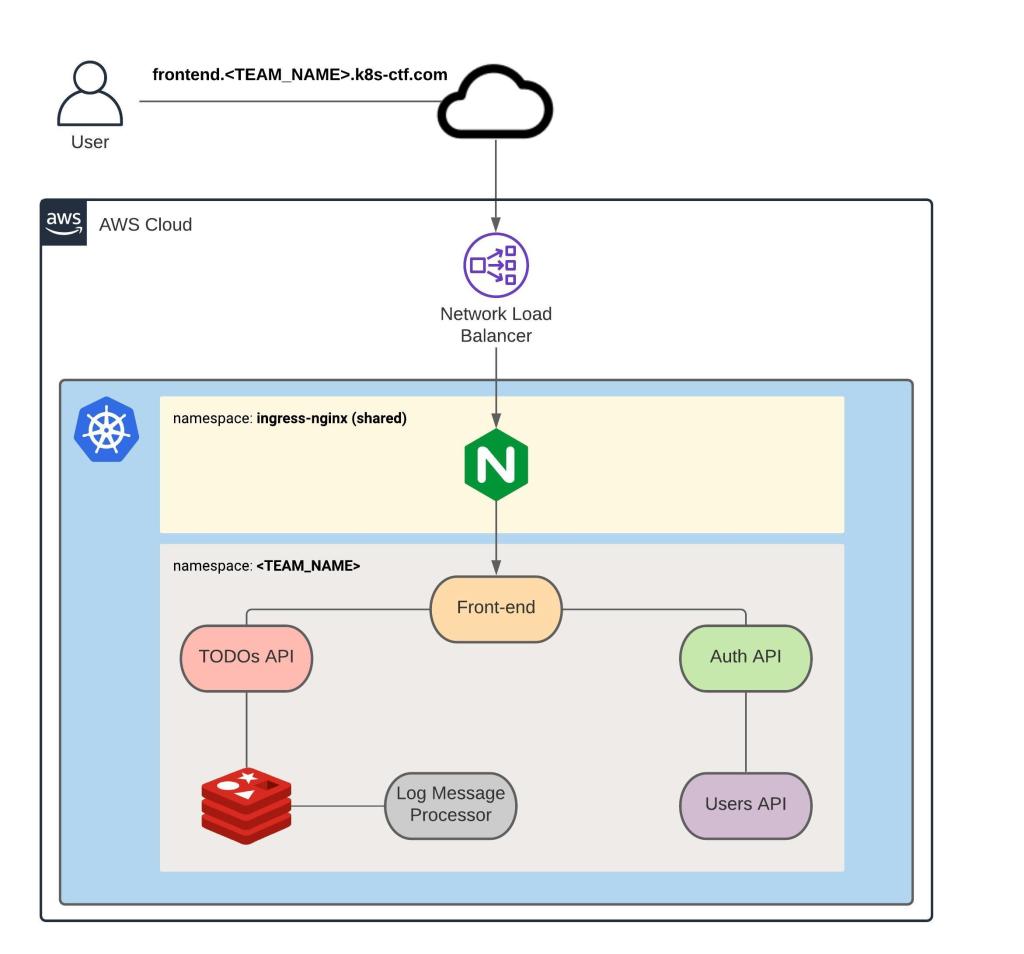

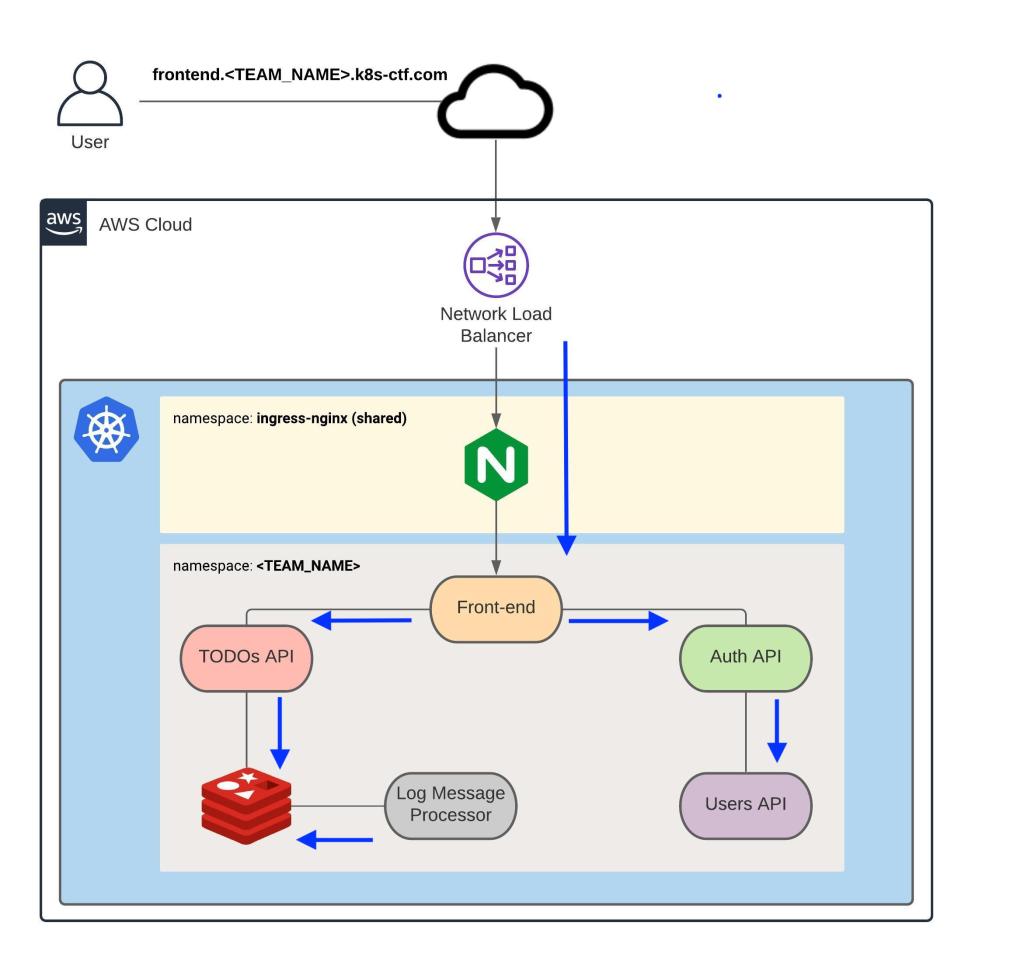

The CTF walks us through the deployment of a TODO application made up of five microservices plus Redis. The Kubernetes cluster itself runs on Amazon Elastic Kubernetes Service (EKS).

The architecture that we are working on is shown below and consists of:

- A Front-end, the only public-facing microservice, which sits behind a Load Balancer

- Auth API

- TODOs API

- Users API

- Log Message Processor

- Redis

Capture The Flag

The first flag for this step is simply a confirmation that we understand what a CTF flag is.

DevSlopCTF{ok}

Accessing The Cluster (2 Points)

This section offers two ways of accessing the infrastructure. One option is AWS Cloud9, a cloud-based IDE for writing, running, and debugging code with just a browser. The other is to use your own local machine. I chose my local machine.

Configuring The AWS CLI

You are advised to use AWS CLI version 1.18 or later. Let’s double-check that we have a recent enough version installed.

$ aws --version

aws-cli/1.18.216 Python/3.8.5 Linux/5.4.0-65-generic botocore/1.19.56

The next step is to configure an AWS profile that matches my team name, team4, using the command aws configure --profile <TEAM_NAME>. The Access Key ID and Secret Access Key were provided out of band. I have pasted them here because they have already been invalidated.

$ aws configure --profile team4

AWS Access Key ID [None]: AKIAQXZMQZU3FWERBCN5

AWS Secret Access Key [None]: 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

Default region name [None]: us-east-1

Default output format [None]: json

We are then required to export an environment variable called AWS_PROFILE and set it to the team name provided, team4. A link is provided to help us.

$ export AWS_PROFILE=team4
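Behind the scenes, aws configure --profile team4 stores the keys in ~/.aws/credentials under a [team4] section, and the region and output format in ~/.aws/config under a [profile team4] section; exporting AWS_PROFILE makes later commands pick that profile. The minimal Python sketch below mimics only the region lookup, assuming the config file's text is passed in as a string. The function name profile_region is hypothetical, and the real CLI also consults other sources such as the --region flag and the AWS_DEFAULT_REGION environment variable.

```python
import configparser
import os
from typing import Mapping, Optional


def profile_region(config_text: str, env: Mapping[str, str] = os.environ) -> Optional[str]:
    """Return the region set for the active profile in ~/.aws/config-style text.

    Simplified sketch: only AWS_PROFILE and the config file are consulted.
    """
    profile = env.get("AWS_PROFILE", "default")
    # Named profiles appear as "[profile NAME]" in ~/.aws/config; the default one is just "[default]".
    section = "default" if profile == "default" else f"profile {profile}"
    parser = configparser.ConfigParser()
    parser.read_string(config_text)
    # fallback=None also covers the case where the section does not exist at all.
    return parser.get(section, "region", fallback=None)


# Example: the section that `aws configure --profile team4` would create from the answers above.
TEAM4_CONFIG = """
[profile team4]
region = us-east-1
output = json
"""

print(profile_region(TEAM4_CONFIG, {"AWS_PROFILE": "team4"}))  # us-east-1
```

Without AWS_PROFILE set, the sketch falls back to the [default] section, which this file does not have, so it returns None.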

The next step is to validate that the CLI is properly configured using the command aws sts get-caller-identity --profile <TEAM_NAME>

$ aws sts get-caller-identity --profile team4

{

"UserId": "AIDAQXZMQZU3GGS2XHEJ2",

"Account": "051096112438",

"Arn": "arn:aws:iam::051096112438:user/team4"

}

As expected, the UserId returned differs from the AWS Access Key ID, and the Amazon Resource Name (ARN) contains our IAM user, team4.
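If you want to script this sanity check, for instance to confirm that the right profile is active before running anything else, a minimal Python sketch could parse the JSON that the command prints. The function name is hypothetical, and it only handles IAM user ARNs of the shape shown above; other identities, such as assumed roles, use a different ARN format and are rejected.

```python
import json
from typing import Dict


def parse_caller_identity(raw: str) -> Dict[str, str]:
    """Pull the account ID and IAM user name out of `aws sts get-caller-identity` JSON."""
    identity = json.loads(raw)
    # An IAM user ARN has the shape arn:aws:iam::<account-id>:user/<user-name>
    _, _, service, _, account, resource = identity["Arn"].split(":", 5)
    resource_type, _, name = resource.partition("/")
    if service != "iam" or resource_type != "user":
        raise ValueError(f"not an IAM user ARN: {identity['Arn']}")
    # The account embedded in the ARN should match the top-level Account field.
    if account != identity["Account"]:
        raise ValueError("ARN account does not match the Account field")
    return {"account": account, "user": name}


OUTPUT = """{
    "UserId": "AIDAQXZMQZU3GGS2XHEJ2",
    "Account": "051096112438",
    "Arn": "arn:aws:iam::051096112438:user/team4"
}"""

print(parse_caller_identity(OUTPUT))  # {'account': '051096112438', 'user': 'team4'}
```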

Configuring kubectl

The next task is to generate the kubeconfig file, which lives in the $HOME/.kube directory.

We are provided with the command aws eks --region us-east-1 update-kubeconfig --name Kubernetes-ctf-cluster to generate the kubeconfig file.

$ aws eks --region us-east-1 update-kubeconfig --name Kubernetes-ctf-cluster

Added new context arn:aws:eks:us-east-1:051096112438:cluster/Kubernetes-ctf-cluster to /home/userxx/.kube/config

The command generates the kubeconfig file at /home/userxx/.kube/config. The contents of the file are shown below.

$ cat /home/userxx/.kube/config

apiVersion: v1
clusters:
- cluster:
    certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUN5RENDQWJDZ0F3SUJBZ0lCQURBTkJna3Foa2lHOXcwQkFRc0ZBREFWTVJNd0VRWURWUVFERXdwcmRXSmwKY201bGRHVnpNQjRYRFRJeE1ERXlOVEUwTkRjMU1Gb1hEVE14TURFeU16RTBORGMxTUZvd0ZURVRNQkVHQTFVRQpBeE1LYTNWaVpYSnVaWFJsY3pDQ0FTSXdEUVlKS29aSWh2Y05BUUVCQlFBRGdnRVBBRENDQVFvQ2dnRUJBTXNECmdiSnhnN0kvNE9OanNVbWsvT1pYa0hjRlBPK05XOTRCb0lXbUM4ZDhJNm1FZ2ZDMDlmVmVhR21ORHFGTXkzRUQKejFzb1M0NWQzd096NU00UGNuM0syK29yYXhURmlvakJaOCtWdXp0VWl2akxGNENhZlNUb2R0Vyttc2lCN0s2Vgo3V1Vsd20yNDU0aWFiSkFFOWQ0d3dkZ294RE9BWGk1VEJMNkQ2QWxoMCtTeFhoUGxKT0NDNTh3VjRPMzBzTXh1CnU0UE5nR0xpVVF3N2RNM09YSEtKVEt3TG94M3FzMEppYnpRL3J4V0tnR0dzL3ZKQUNsK0pDWFYrd1lBZE13MysKN3ZteHhaMVVUQ1lScXZUNXU5b1NFZklwUmp4SU05cllSVW9sZWtkNEpCYlFacjNNd2o1Yk42MmpyTlZnUVBKZgpMamZpUWF2MXRHYzZSaERIcmdrQ0F3RUFBYU1qTUNFd0RnWURWUjBQQVFIL0JBUURBZ0trTUE4R0ExVWRFd0VCCi93UUZNQU1CQWY4d0RRWUpLb1pJaHZjTkFRRUxCUUFEZ2dFQkFNamYzRUlYYTEreWhrN1cweUZZKzhISHdKVmUKdCszbjkxeHU2Sm53WjlPalBEcFR3bzBkZGhXQU16b24rZXFRdmwrR2FtT2JsMGwwL0FnRFdqMytoRHpjaEo3TApBbjY3clkxaHB1OGJzUWdLUDdTNDZ4L0RsTHdtaDhwVnc1NTAxVjN5MnUwbVlOZjFWVVAxNDlTVzBmOENZTThOCktlMk0rL3hBbktXN0dHaWZFQTFrZE9ZbGQyekZMUXlkdWdwR0FCVmpid01ZZzJqYk5hTU5WV3Jacld2QktQdlgKVlJpOVM4bE9WUEJWc0FuOXhadGttNmFkQ0kzcVNiS0FsTDFFYzRpeWswZHM4RW1iMmhvazRtOWRBRkRDNHhQegpVVU1sbUViVmt0UmlaaDNkbkV6cDJQcmNHU00vcXh1Rm1tVmR4VEM5eTI5YXY4MkhKYVhnYU91bzl2Yz0KLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo=
    server: https://E856E5152BB1C1CC76291D085F3EE7EE.gr7.us-east-1.eks.amazonaws.com
  name: arn:aws:eks:us-east-1:051096112438:cluster/Kubernetes-ctf-cluster
contexts:
- context:
    cluster: arn:aws:eks:us-east-1:051096112438:cluster/Kubernetes-ctf-cluster
    user: arn:aws:eks:us-east-1:051096112438:cluster/Kubernetes-ctf-cluster
  name: arn:aws:eks:us-east-1:051096112438:cluster/Kubernetes-ctf-cluster
current-context: arn:aws:eks:us-east-1:051096112438:cluster/Kubernetes-ctf-cluster
kind: Config
preferences: {}
users:
- name: arn:aws:eks:us-east-1:051096112438:cluster/Kubernetes-ctf-cluster
  user:
    exec:
      apiVersion: client.authentication.k8s.io/v1alpha1
      args:
      - --region
      - us-east-1
      - eks
      - get-token
      - --cluster-name
      - Kubernetes-ctf-cluster
      command: aws
      env:
      - name: AWS_PROFILE
        value: team4
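Note that this file holds no static token: the users[].user.exec section tells kubectl to run an external command whenever it needs credentials, and aws eks get-token prints a short-lived token for the cluster. The Python sketch below only shows which command line and extra environment that stanza translates to; it is an approximation of what kubectl does, not its actual code. The function name is hypothetical, and the stanza is hand-copied into a dict to avoid a third-party YAML dependency.

```python
import os
from typing import Any, Dict, List, Mapping, Tuple


def exec_plugin_invocation(
    exec_cfg: Mapping[str, Any], base_env: Mapping[str, str] = os.environ
) -> Tuple[List[str], Dict[str, str]]:
    """Return the argv and environment that a kubeconfig `exec` stanza asks the client to run."""
    argv = [exec_cfg["command"], *exec_cfg.get("args", [])]
    env = dict(base_env)
    # Variables listed under `env:` are layered on top of the caller's own environment.
    for var in exec_cfg.get("env") or []:
        env[var["name"]] = var["value"]
    return argv, env


# The users[0].user.exec stanza from the kubeconfig above, written out as a Python dict.
EKS_EXEC = {
    "apiVersion": "client.authentication.k8s.io/v1alpha1",
    "command": "aws",
    "args": ["--region", "us-east-1", "eks", "get-token", "--cluster-name", "Kubernetes-ctf-cluster"],
    "env": [{"name": "AWS_PROFILE", "value": "team4"}],
}

argv, env = exec_plugin_invocation(EKS_EXEC, base_env={})
print(" ".join(argv))  # aws --region us-east-1 eks get-token --cluster-name Kubernetes-ctf-cluster
print(env)  # {'AWS_PROFILE': 'team4'}
```

This is also why the AWS_PROFILE export matters: the token is minted with whichever AWS credentials that profile resolves to.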

To test that we have gained access to the cluster, we need to run kubectl get pods -n <TEAM_NAME>.

$ kubectl get pods -n team4

No resources found in team4 namespace.

So far so good. The expected output for this command is indeed “No resources found in <TEAM_NAME> namespace.”, because nothing has been deployed to our namespace yet.

Cloning The Application Repository

The application repository is hosted on GitHub. We need to clone it to our local machine.

$ git clone git@github.com:thedojoseries/Kubernetes-ctf.git

Cloning into 'Kubernetes-ctf'...

The authenticity of host 'github.com (140.82.121.3)' can't be established.

RSA key fingerprint is SHA256:XXXXXXXXXXXX.

Are you sure you want to continue connecting (yes/no/[fingerprint])? yes

Warning: Permanently added 'github.com,140.82.121.3' (RSA) to the list of known hosts.

remote: Enumerating objects: 448, done.

remote: Counting objects: 100% (448/448), done.

remote: Compressing objects: 100% (255/255), done.

remote: Total 448 (delta 240), reused 359 (delta 151), pack-reused 0

Receiving objects: 100% (448/448), 812.27 KiB | 2.03 MiB/s, done.

Resolving deltas: 100% (240/240), done.

The files that we cloned are shown below.

$ cd Kubernetes-ctf/

$ ls

auth-api frontend log-message-processor todos-api users-api

Capture The Flag

This is also a free flag, confirming that everything is set up as expected and that we have access to the cluster.

DevSlopCTF{I am ready}

Building Docker Images

Before we can deploy containers to the Kubernetes cluster, we first need to build a Docker image for each microservice. Redis already has an official image on Docker Hub, so it does not need to be built.

Introduction (1 Point)

Each folder that we cloned contains a Dockerfile with the instructions Docker uses to build that service’s image.

Let’s verify the contents.

Dockerfile contents for auth-api.

userxx@lsv-u01:~/Downloads/Kubernetes-ctf$ cat auth-api/Dockerfile

FROM golang:1.9-alpine
EXPOSE 8081
WORKDIR /go/src/app
RUN apk --no-cache add curl git && \
    curl https://raw.githubusercontent.com/golang/dep/master/install.sh | sh
COPY . .
RUN dep ensure
RUN go build -o auth-api
CMD /go/src/app/auth-api

Dockerfile contents for frontend.

userxx@lsv-u01:~/Downloads/Kubernetes-ctf$ cat frontend/Dockerfile

FROM node:8-alpine

EXPOSE 8080

WORKDIR /usr/src/app

COPY package.json ./

RUN npm install

COPY . .

CMD ["sh", "-c", "npm start" ]

Dockerfile contents for log-message-processor.

$ cat log-message-processor/Dockerfile

FROM python:3.6-alpine

WORKDIR /usr/src/app

RUN apk add --no-cache build-base

COPY requirements.txt .

RUN pip3 install -r requirements.txt

COPY main.py .

CMD ["python3","-u","main.py"]

Dockerfile contents for todos-api.

userxx@lsv-u01:~/Downloads/Kubernetes-ctf$ cat todos-api/Dockerfile

FROM node:8-alpine

EXPOSE 8082

WORKDIR /usr/src/app

COPY package.json ./

RUN npm install

COPY . .

CMD ["sh", "-c", "npm start" ]

Dockerfile contents for users-api.

userxx@lsv-u01:~/Downloads/Kubernetes-ctf$ cat users-api/Dockerfile

FROM openjdk:8-alpine

EXPOSE 8083

WORKDIR /usr/src/app

COPY pom.xml mvnw ./

COPY .mvn/ ./.mvn

RUN ./mvnw dependency:resolve

COPY . .

RUN ./mvnw install

CMD ["java", "-jar", "./target/users-api-0.0.1-SNAPSHOT.jar"]
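One detail worth noting in these Dockerfiles is the EXPOSE line: frontend, auth-api, todos-api and users-api declare ports 8080, 8081, 8082 and 8083 respectively, while log-message-processor declares none. EXPOSE only documents a port; it does not publish it, but the numbers will likely be needed when the services are wired together in Kubernetes. A small Python sketch to pull them out (the function name is hypothetical, and it does not handle port ranges or variable substitution):

```python
from typing import Dict, List


def exposed_ports(dockerfile: str) -> List[int]:
    """Collect the port numbers declared by EXPOSE instructions in a Dockerfile."""
    ports: List[int] = []
    for line in dockerfile.splitlines():
        words = line.split()
        if words and words[0].upper() == "EXPOSE":
            for spec in words[1:]:
                # EXPOSE accepts "8080" as well as "8080/tcp" or "8080/udp".
                ports.append(int(spec.split("/")[0]))
    return ports


# Trimmed copies of two of the Dockerfiles above.
DOCKERFILES: Dict[str, str] = {
    "frontend": "FROM node:8-alpine\nEXPOSE 8080\nWORKDIR /usr/src/app\n",
    "log-message-processor": "FROM python:3.6-alpine\nWORKDIR /usr/src/app\n",
}

for service, text in DOCKERFILES.items():
    print(service, exposed_ports(text))
# frontend [8080]
# log-message-processor []
```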

Capture The Flag

This flag is simply to validate that we have the required files.

DevSlopCTF{ok}

Front-End (99 Points)

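We are instructed to use the docker build command to build the front-end image, and a helpful link to the Docker docs is provided. The usage for this command is docker build [OPTIONS] PATH | URL | -. The help page also shows that a single Dockerfile can be piped in via STDIN with docker build - < Dockerfile, but that sends no build context to the Docker daemon, so instructions such as COPY package.json ./ would have nothing to copy. Since we were told to use the Dockerfile in the frontend folder, the simplest option is to pass that folder as the PATH: Docker uses it as the build context and picks up the file named Dockerfile in it by default. Let’s try that from inside the frontend folder.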

userxx@lsv-u01:~/Downloads/Kubernetes-ctf/frontend$ docker build .

Sending build context to Docker daemon 451.6kB

Step 1/7 : FROM node:8-alpine

---> 2b8fcdc6230a

Step 2/7 : EXPOSE 8080

---> Using cache

---> 8ec4ed1e32b7

Step 3/7 : WORKDIR /usr/src/app

---> Using cache

---> 22277587cc80

Step 4/7 : COPY package.json ./

---> e4698944daed

Step 5/7 : RUN npm install

---> Running in 39cde6fe1423

npm WARN deprecated babel-eslint@7.2.3: babel-eslint is now @babel/eslint-parser. This package will no longer receive updates.

npm WARN deprecated eslint-loader@1.9.0: This loader has been deprecated. Please use eslint-webpack-plugin

npm WARN deprecated extract-text-webpack-plugin@2.1.2: Deprecated. Please use https://github.com/webpack-contrib/mini-css-extract-plugin

npm WARN deprecated core-js@2.6.12: core-js@<3 is no longer maintained and not recommended for usage due to the number of issues. Please, upgrade your dependencies to the actual version of core-js@3.

npm WARN deprecated browserslist@2.11.3: Browserslist 2 could fail on reading Browserslist >3.0 config used in other tools.

npm WARN deprecated request@2.88.2: request has been deprecated, see https://github.com/request/request/issues/3142

npm WARN deprecated bfj-node4@5.3.1: Switch to the `bfj` package for fixes and new features!

npm WARN deprecated har-validator@5.1.5: this library is no longer supported

npm WARN deprecated browserslist@1.7.7: Browserslist 2 could fail on reading Browserslist >3.0 config used in other tools.

npm WARN deprecated circular-json@0.3.3: CircularJSON is in maintenance only, flatted is its successor.

npm WARN deprecated chokidar@2.1.8: Chokidar 2 will break on node v14+. Upgrade to chokidar 3 with 15x less dependencies.

npm WARN deprecated fsevents@1.2.13: fsevents 1 will break on node v14+ and could be using insecure binaries. Upgrade to fsevents 2.

npm WARN deprecated urix@0.1.0: Please see https://github.com/lydell/urix#deprecated

npm WARN deprecated resolve-url@0.2.1: https://github.com/lydell/resolve-url#deprecated

> node-sass@4.14.1 install /usr/src/app/node_modules/node-sass

> node scripts/install.js

Downloading binary from https://github.com/sass/node-sass/releases/download/v4.14.1/linux_musl-x64-57_binding.node

Download complete

Binary saved to /usr/src/app/node_modules/node-sass/vendor/linux_musl-x64-57/binding.node

Caching binary to /root/.npm/node-sass/4.14.1/linux_musl-x64-57_binding.node

> core-js@2.6.12 postinstall /usr/src/app/node_modules/core-js

> node -e "try{require('./postinstall')}catch(e){}"

Thank you for using core-js ( https://github.com/zloirock/core-js ) for polyfilling JavaScript standard library!

The project needs your help! Please consider supporting of core-js on Open Collective or Patreon:

> https://opencollective.com/core-js

> https://www.patreon.com/zloirock

Also, the author of core-js ( https://github.com/zloirock ) is looking for a good job -)

> ejs@2.7.4 postinstall /usr/src/app/node_modules/ejs

> node ./postinstall.js

Thank you for installing EJS: built with the Jake JavaScript build tool (https://jakejs.com/)

> node-sass@4.14.1 postinstall /usr/src/app/node_modules/node-sass

> node scripts/build.js

Binary found at /usr/src/app/node_modules/node-sass/vendor/linux_musl-x64-57/binding.node

Testing binary

Binary is fine

npm notice created a lockfile as package-lock.json. You should commit this file.

npm WARN notsup Unsupported engine for js-beautify@1.13.5: wanted: {"node":">=10"} (current: {"node":"8.17.0","npm":"6.13.4"})

npm WARN notsup Not compatible with your version of node/npm: js-beautify@1.13.5

npm WARN notsup Unsupported engine for mkdirp@1.0.4: wanted: {"node":">=10"} (current: {"node":"8.17.0","npm":"6.13.4"})

npm WARN notsup Not compatible with your version of node/npm: mkdirp@1.0.4

npm WARN optional SKIPPING OPTIONAL DEPENDENCY: fsevents@~2.3.1 (node_modules/chokidar/node_modules/fsevents):

npm WARN notsup SKIPPING OPTIONAL DEPENDENCY: Unsupported platform for fsevents@2.3.2: wanted {"os":"darwin","arch":"any"} (current: {"os":"linux","arch":"x64"})

npm WARN optional SKIPPING OPTIONAL DEPENDENCY: fsevents@^1.2.7 (node_modules/watchpack-chokidar2/node_modules/chokidar/node_modules/fsevents):

npm WARN notsup SKIPPING OPTIONAL DEPENDENCY: Unsupported platform for fsevents@1.2.13: wanted {"os":"darwin","arch":"any"} (current: {"os":"linux","arch":"x64"})

added 1290 packages from 743 contributors and audited 1300 packages in 41.668s

11 packages are looking for funding

run `npm fund` for details

found 21 vulnerabilities (4 low, 9 moderate, 8 high)

run `npm audit fix` to fix them, or `npm audit` for details

Removing intermediate container 39cde6fe1423

---> deaa51f7a47b

Step 6/7 : COPY . .

---> 61aa7ed8eb7b

Step 7/7 : CMD ["sh", "-c", "npm start" ]

---> Running in 74f2881dbea3

Removing intermediate container 74f2881dbea3

---> e019f9b4bc2d

Successfully built e019f9b4bc2d

Capture The Flag

We learn that the front-end uses a package manager to install dependencies, and the flag is the name of that package manager. From the helpful link, we learn that a package manager is a collection of software tools that automates the process of installing, upgrading, configuring, and removing programs for a system in a consistent manner.

From the output above, we can see that the package manager being used is npm, from the instruction RUN npm install.

DevSlopCTF{npm}
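Spotting the package manager by eye works fine for five Dockerfiles, but the rule used here, looking at which tool the RUN steps call, can be sketched in Python. The lookup table and function name are my own illustration: the sketch only understands shell-form RUN lines (not the JSON exec form), and it deliberately ignores OS-level installers like apk, since the flags ask about the application's dependencies.

```python
import re
from typing import List

# Hypothetical lookup table: first word of a RUN command -> application package manager.
# OS-level installers such as apk are deliberately left out.
MANAGERS = {
    "npm": "npm",
    "./mvnw": "maven",
    "mvn": "maven",
    "pip3": "pip3",
    "pip": "pip",
    "dep": "dep",
}


def package_managers(dockerfile: str) -> List[str]:
    """Return the package managers invoked by shell-form RUN instructions, in order of first use."""
    # Fold backslash line continuations so a multi-line RUN becomes one logical line.
    logical = re.sub(r"\\[ \t]*\n", " ", dockerfile)
    found: List[str] = []
    for line in logical.splitlines():
        words = line.split(maxsplit=1)
        if len(words) < 2 or words[0].upper() != "RUN":
            continue
        # Split chained shell commands such as `a && b`, `a || b`, `a; b` or `a | b`.
        for command in re.split(r"&&|\|\||;|\|", words[1]):
            tokens = command.split()
            if tokens and tokens[0] in MANAGERS and MANAGERS[tokens[0]] not in found:
                found.append(MANAGERS[tokens[0]])
    return found


# The auth-api Dockerfile's RUN steps, including its multi-line `apk ... && curl ... | sh`.
AUTH_API = (
    "FROM golang:1.9-alpine\n"
    "RUN apk --no-cache add curl git && \\\n"
    "    curl https://raw.githubusercontent.com/golang/dep/master/install.sh | sh\n"
    "RUN dep ensure\n"
    "RUN go build -o auth-api\n"
)

print(package_managers(AUTH_API))  # ['dep']
```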

TODOs API (99 Points)

Next, we are advised to run docker build to build an image for the TODOs API.

userxx@lsv-u01:~/Downloads/Kubernetes-ctf/frontend$ cd ../todos-api/

userxx@lsv-u01:~/Downloads/Kubernetes-ctf/todos-api$ docker build .

Sending build context to Docker daemon 96.26kB

Step 1/7 : FROM node:8-alpine

---> 2b8fcdc6230a

Step 2/7 : EXPOSE 8082

---> Running in bad4d09b9d98

Removing intermediate container bad4d09b9d98

---> e0cef1fb5fbc

Step 3/7 : WORKDIR /usr/src/app

---> Running in d6748de2aa04

Removing intermediate container d6748de2aa04

---> 5f27504f78a0

Step 4/7 : COPY package.json ./

---> 09eb14a41424

Step 5/7 : RUN npm install

---> Running in 339b58d32b3b

npm WARN deprecated chokidar@2.1.8: Chokidar 2 will break on node v14+. Upgrade to chokidar 3 with 15x less dependencies.

npm WARN deprecated fsevents@1.2.13: fsevents 1 will break on node v14+ and could be using insecure binaries. Upgrade to fsevents 2.

npm WARN deprecated resolve-url@0.2.1: https://github.com/lydell/resolve-url#deprecated

npm WARN deprecated urix@0.1.0: Please see https://github.com/lydell/urix#deprecated

> nodemon@1.19.4 postinstall /usr/src/app/node_modules/nodemon

> node bin/postinstall || exit 0

Love nodemon? You can now support the project via the open collective:

> https://opencollective.com/nodemon/donate

npm notice created a lockfile as package-lock.json. You should commit this file.

npm WARN optional SKIPPING OPTIONAL DEPENDENCY: fsevents@^1.2.7 (node_modules/chokidar/node_modules/fsevents):

npm WARN notsup SKIPPING OPTIONAL DEPENDENCY: Unsupported platform for fsevents@1.2.13: wanted {"os":"darwin","arch":"any"} (current: {"os":"linux","arch":"x64"})

npm WARN zipkin-instrumentation-express@0.11.2 requires a peer of @types/express@^4.0.39 but none is installed. You must install peer dependencies yourself.

npm WARN todos-api@1.0.0 No repository field.

added 304 packages from 194 contributors and audited 305 packages in 9.814s

found 1 low severity vulnerability

run `npm audit fix` to fix them, or `npm audit` for details

Removing intermediate container 339b58d32b3b

---> dc9b43ab2a1d

Step 6/7 : COPY . .

---> e595beed5e74

Step 7/7 : CMD ["sh", "-c", "npm start" ]

---> Running in d25ef931c0f1

Removing intermediate container d25ef931c0f1

---> 19213d5f426d

Successfully built 19213d5f426d

Capture The Flag

The TODOs API also uses a package manager to install dependencies, and the flag is its name. From Step 5/7 : RUN npm install, we see that it uses the same package manager as the front-end.

DevSlopCTF{npm}

Users API (99 Points)

The same docker build command is used to build the image for the Users API. I have truncated the output because it was really noisy.

userxx@lsv-u01:~/Downloads/Kubernetes-ctf/todos-api$ cd ../users-api/

userxx@lsv-u01:~/Downloads/Kubernetes-ctf/users-api$ docker build .

Sending build context to Docker daemon 94.72kB

Step 1/9 : FROM openjdk:8-alpine

8-alpine: Pulling from library/openjdk

e7c96db7181b: Pull complete

f910a506b6cb: Pull complete

c2274a1a0e27: Pull complete

Digest: sha256:94792824df2df33402f201713f932b58cb9de94a0cd524164a0f2283343547b3

Status: Downloaded newer image for openjdk:8-alpine

---> a3562aa0b991

Step 2/9 : EXPOSE 8083

---> Running in 3442a739a636

Removing intermediate container 3442a739a636

---> 7b932e811074

Step 3/9 : WORKDIR /usr/src/app

---> Running in eb208f26841b

Removing intermediate container eb208f26841b

---> 26b97c1c461c

Step 4/9 : COPY pom.xml mvnw ./

---> e019cc1d31aa

Step 5/9 : COPY .mvn/ ./.mvn

---> 971689fe5512

Step 6/9 : RUN ./mvnw dependency:resolve

---> Running in ad8a0b42772f

/usr/src/app

Downloading https://repo1.maven.org/maven2/org/apache/maven/apache-maven/3.5.0/apache-maven-3.5.0-bin.zip

..................................................................................................................................................................................................................................................................................................................................................................................................................................................

Unzipping /root/.m2/wrapper/dists/apache-maven-3.5.0-bin/6ps54u5pnnbbpr6ds9rppcc7iv/apache-maven-3.5.0-bin.zip to /root/.m2/wrapper/dists/apache-maven-3.5.0-bin/6ps54u5pnnbbpr6ds9rppcc7iv

Set executable permissions for: /root/.m2/wrapper/dists/apache-maven-3.5.0-bin/6ps54u5pnnbbpr6ds9rppcc7iv/apache-maven-3.5.0/bin/mvn

// TRUNCATED

Downloaded: https://repo.maven.apache.org/maven2/commons-collections/commons-collections/3.2.1/commons-collections-3.2.1.jar (575 kB at 608 kB/s)

[INFO]

[INFO] The following files have been resolved:

[INFO] com.fasterxml.jackson.core:jackson-annotations:jar:2.8.0:compile

// TRUNCATED

[INFO] ------------------------------------------------------------------------

[INFO] BUILD SUCCESS

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 28.407 s

[INFO] Finished at: 2021-02-14T21:02:51Z

[INFO] Final Memory: 27M/236M

[INFO] ------------------------------------------------------------------------

Removing intermediate container ad8a0b42772f

---> d7cf468bcca3

Step 7/9 : COPY . .

---> c71fe75c9922

Step 8/9 : RUN ./mvnw install

---> Running in 7b72ff956697

/usr/src/app

[INFO] Scanning for projects...

[INFO]

[INFO] ------------------------------------------------------------------------

[INFO] Building users-api 0.0.1-SNAPSHOT

[INFO] ------------------------------------------------------------------------

[INFO]

[INFO] --- maven-resources-plugin:2.6:resources (default-resources) @ users-api ---

Downloading: https://repo.maven.apache.org/maven2/org/apache/maven/maven-project/2.0.6/maven-project-2.0.6.pom

// TRUNCATED

Downloaded: https://repo.maven.apache.org/maven2/com/google/collections/google-collections/1.0/google-collections-1.0.jar (640 kB at 1.6 MB/s)

[INFO] Changes detected - recompiling the module!

[INFO] Compiling 8 source files to /usr/src/app/target/classes

[INFO]

[INFO] --- maven-resources-plugin:2.6:testResources (default-testResources) @ users-api ---

[INFO] Using 'UTF-8' encoding to copy filtered resources.

[INFO] skip non existing resourceDirectory /usr/src/app/src/test/resources

[INFO]

[INFO] --- maven-compiler-plugin:3.1:testCompile (default-testCompile) @ users-api ---

[INFO] Changes detected - recompiling the module!

[INFO] Compiling 1 source file to /usr/src/app/target/test-classes

[INFO]

[INFO] --- maven-surefire-plugin:2.18.1:test (default-test) @ users-api ---

// TRUNCATED

[INFO] Installing /usr/src/app/target/users-api-0.0.1-SNAPSHOT.jar to /root/.m2/repository/com/elgris/users-api/0.0.1-SNAPSHOT/users-api-0.0.1-SNAPSHOT.jar

[INFO] Installing /usr/src/app/pom.xml to /root/.m2/repository/com/elgris/users-api/0.0.1-SNAPSHOT/users-api-0.0.1-SNAPSHOT.pom

[INFO] ------------------------------------------------------------------------

[INFO] BUILD SUCCESS

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 22.458 s

[INFO] Finished at: 2021-02-14T21:03:16Z

[INFO] Final Memory: 37M/348M

[INFO] ------------------------------------------------------------------------

Removing intermediate container 7b72ff956697

---> 8d26fff0f4da

Step 9/9 : CMD ["java", "-jar", "./target/users-api-0.0.1-SNAPSHOT.jar"]

---> Running in 1f29cef84675

Removing intermediate container 1f29cef84675

---> 650b405ecff6

Successfully built 650b405ecff6

userxx@lsv-u01:~/Downloads/Kubernetes-ctf/users-api$

Capture The Flag

The flag is the name of the package manager. From Step 8/9 : RUN ./mvnw install and the noisy output, we seem to be installing something to do with Maven (mvnw is the Maven Wrapper script, which downloads apache-maven-3.5.0 in Step 6/9). I had not used this package manager before, so Google to the rescue: Apache Maven can manage a project’s build, reporting and documentation. We got our flag.

DevSlopCTF{maven}

Log Message Processor (99 Points)

Next, we build the image for the Log Message Processor.

userxx@lsv-u01:~/Downloads/Kubernetes-ctf/users-api$ cd ../log-message-processor/

userxx@lsv-u01:~/Downloads/Kubernetes-ctf/log-message-processor$ docker build .

Sending build context to Docker daemon 7.168kB

Step 1/7 : FROM python:3.6-alpine

3.6-alpine: Pulling from library/python

4c0d98bf9879: Pull complete

5e807dbff582: Pull complete

1cf32de05765: Pull complete

5818ae83b301: Pull complete

0d4c65e1344c: Pull complete

Digest: sha256:4aae963dcacd3086dfa8d82a5f123691b893d05064280877c83f3dbf609efd61

Status: Downloaded newer image for python:3.6-alpine

---> d39b82549c6d

Step 2/7 : WORKDIR /usr/src/app

---> Running in 235f0c1d8727

Removing intermediate container 235f0c1d8727

---> 1af3701dfc8f

Step 3/7 : RUN apk add --no-cache build-base

---> Running in 3ce63ebb530a

fetch https://dl-cdn.alpinelinux.org/alpine/v3.13/main/x86_64/APKINDEX.tar.gz

fetch https://dl-cdn.alpinelinux.org/alpine/v3.13/community/x86_64/APKINDEX.tar.gz

(1/20) Installing libgcc (10.2.1_pre1-r3)

(2/20) Installing libstdc++ (10.2.1_pre1-r3)

//TRUNCATED

(20/20) Installing build-base (0.5-r2)

Executing busybox-1.32.1-r2.trigger

OK: 201 MiB in 54 packages

Removing intermediate container 3ce63ebb530a

---> f9ffb9ea858f

Step 4/7 : COPY requirements.txt .

---> baf254a2ecae

Step 5/7 : RUN pip3 install -r requirements.txt

---> Running in 17dff05555ba

Collecting redis==2.10.6

Downloading redis-2.10.6-py2.py3-none-any.whl (64 kB)

//TRUNCATED

Building wheels for collected packages: thriftpy

Building wheel for thriftpy (setup.py): started

Building wheel for thriftpy (setup.py): finished with status 'done'

Created wheel for thriftpy: filename=thriftpy-0.3.9-cp36-cp36m-linux_x86_64.whl size=168220 sha256=9b7bab4aba79f01386282b816f575d239c348163faa69c00acb914e5a86baf3d

Stored in directory: /root/.cache/pip/wheels/5b/5c/97/4f89b14ea7db3aa07d6c3b6d13459671f7a2dcdf57ccfcf00c

Successfully built thriftpy

Installing collected packages: ply, urllib3, thriftpy, six, idna, chardet, certifi, requests, redis, py-zipkin

Successfully installed certifi-2020.12.5 chardet-4.0.0 idna-2.10 ply-3.11 py-zipkin-0.11.0 redis-2.10.6 requests-2.25.1 six-1.15.0 thriftpy-0.3.9 urllib3-1.26.3

Removing intermediate container 17dff05555ba

---> c495d4c464a8

Step 6/7 : COPY main.py .

---> abd87d5c6e6f

Step 7/7 : CMD ["python3","-u","main.py"]

---> Running in 6601df6950f1

Removing intermediate container 6601df6950f1

---> 4144a6eeb4fa

Successfully built 4144a6eeb4fa

Capture The Flag

The flag is the name of the package manager. The command Step 5/7 : RUN pip3 install -r requirements.txt shows that we are using pip3, the package installer for Python.

DevSlopCTF{pip3}

Auth API (103 Points)

The last Docker image that we need to build is the one for the Auth API.

userxx@lsv-u01:~/Downloads/Kubernetes-ctf/log-message-processor$ cd ../auth-api/

userxx@lsv-u01:~/Downloads/Kubernetes-ctf/auth-api$ docker build .

Sending build context to Docker daemon 18.43kB

Step 1/8 : FROM golang:1.9-alpine

1.9-alpine: Pulling from library/golang

8e3ba11ec2a2: Pull complete

8e6b2bc60854: Pull complete

3d20cafe6dc8: Pull complete

533d243d9519: Pull complete

61a3cf7df0db: Pull complete

ec4d1222aabd: Pull complete

a2fb1cdee015: Pull complete

Digest: sha256:220aaadccc956ab874ff9744209e5a756d7a32bcffede14d08589c2c54801ce0

Status: Downloaded newer image for golang:1.9-alpine

---> b0260be938c6

Step 2/8 : EXPOSE 8081

---> Running in efe61b4658d8

Removing intermediate container efe61b4658d8

---> 5498be9c150e

Step 3/8 : WORKDIR /go/src/app

---> Running in a363f5547db7

Removing intermediate container a363f5547db7

---> f228eb4af2ec

Step 4/8 : RUN apk --no-cache add curl git && curl https://raw.githubusercontent.com/golang/dep/master/install.sh | sh

---> Running in a129d6d58995

fetch http://dl-cdn.alpinelinux.org/alpine/v3.8/main/x86_64/APKINDEX.tar.gz

fetch http://dl-cdn.alpinelinux.org/alpine/v3.8/community/x86_64/APKINDEX.tar.gz

(1/7) Installing nghttp2-libs (1.39.2-r0)

(2/7) Installing libssh2 (1.9.0-r1)

(3/7) Installing libcurl (7.61.1-r3)

(4/7) Installing curl (7.61.1-r3)

(5/7) Installing expat (2.2.8-r0)

(6/7) Installing pcre2 (10.31-r0)

(7/7) Installing git (2.18.4-r0)

Executing busybox-1.28.4-r0.trigger

OK: 19 MiB in 21 packages

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 5230 100 5230 0 0 21004 0 --:--:-- --:--:-- --:--:-- 20920

ARCH = amd64

OS = linux

Will install into /go/bin

Fetching https://github.com/golang/dep/releases/latest..

Release Tag = v0.5.4

Fetching https://github.com/golang/dep/releases/tag/v0.5.4..

Fetching https://github.com/golang/dep/releases/download/v0.5.4/dep-linux-amd64..

Setting executable permissions.

Moving executable to /go/bin/dep

Removing intermediate container a129d6d58995

---> 469a6587de37

Step 5/8 : COPY . .

---> a74b96319369

Step 6/8 : RUN dep ensure

---> Running in 01e855f4dde5

Removing intermediate container 01e855f4dde5

---> a39658c30f4e

Step 7/8 : RUN go build -o auth-api

---> Running in 2eb831f70dd2

Removing intermediate container 2eb831f70dd2

---> 7fec3c87687f

Step 8/8 : CMD /go/src/app/auth-api

---> Running in 80450c71e552

Removing intermediate container 80450c71e552

---> 9b51cc1cddd1

Successfully built 9b51cc1cddd1

Capture The Flag

The flag is the name of the package manager. From the command Step 6/8 : RUN dep ensure, we see that dep is used. dep is the “official experiment” dependency management tool for the Go programming language. We got our flag.

DevSlopCTF{dep}

Pushing Docker Images to Amazon ECR Repositories

The next step is pushing the Docker images to Amazon Elastic Container Registry (ECR), a managed, remote Docker registry.

Introduction (5 Points)

The repositories for each microservice have already been created for us. My repositories are:

- team4-auth-api

- team4-frontend

- team4-log-message-processor

- team4-todos-api

- team4-users-api

In order to push the images to the repositories, we need to log in using the Docker CLI.
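Since I built the images with a bare docker build . (no -t flag), each one is identified only by an image ID such as e019f9b4bc2d. Docker decides where to push an image from its name, so each image first needs a tag of the form <account-id>.dkr.ecr.<region>.amazonaws.com/<repository>:<tag>. A minimal Python sketch that builds that reference and the matching docker tag and docker push commands; the helper names and the latest tag are my assumptions, and the commands are only printed, not executed.

```python
from typing import List


def ecr_image_uri(account_id: str, region: str, repository: str, tag: str = "latest") -> str:
    """Build the image reference Docker needs to push to a private ECR repository."""
    return f"{account_id}.dkr.ecr.{region}.amazonaws.com/{repository}:{tag}"


def push_commands(image_id: str, uri: str) -> List[List[str]]:
    """Return, without running them, the docker commands that tag a local image and push it."""
    return [["docker", "tag", image_id, uri], ["docker", "push", uri]]


# Image ID printed by `docker build .` in the front-end section; account ID from get-caller-identity.
uri = ecr_image_uri("051096112438", "us-east-1", "team4-frontend")
for cmd in push_commands("e019f9b4bc2d", uri):
    print(" ".join(cmd))
# docker tag e019f9b4bc2d 051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-frontend:latest
# docker push 051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-frontend:latest
```

Building with docker build -t <uri> . would give the image the right name from the start and skip the separate tag step.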

Capture The Flag

We need to submit an ok flag confirming that we are all set.

DevSlopCTF{ok}

Front-End (199 Points)

We are advised to google how to push Docker images to AWS ECR. From the Amazon documentation I found, this happens in a couple of steps:

- Authenticate your Docker client to the Amazon ECR registry to which you intend to push your image. I followed the linked instructions, which use get-login.

The output that we saw previously comes in handy, as we need our AWS account ID:

$ aws sts get-caller-identity --profile team4

{

"UserId": "AIDAQXZMQZU3GGS2XHEJ2",

"Account": "051096112438",

"Arn": "arn:aws:iam::051096112438:user/team4"

}

We will use the get-login command to authenticate to our Amazon ECR registry.

$ aws ecr get-login --region us-east-1 --no-include-email

docker login -u AWS -p eyJwYXlsb2FkIjoia0ZkK096YXBEL2pOK1BERkhkalBDbWRSeHFvVHZTUXgyOVdBdUp0czF0Z1RsN1NTMEVsWTlWQXA1d0gxajBta0pJRmxDNit3UGNBTTcvS3Uxd1BGdXVPWlF6emZ4c3hMdDVxRzBKWjFLN3VOTDFuV01SN0xmYXpPL1d1THczSlo1Wm1CMWJ4MUducU0xRDJnZ1FJWEtWbmRoTEtTSWFnOU1Tb0tpQk9Md3VOSWkyZUFoV3BOWUg3cUVHZzd1UEE2NGlHUzI0ZXJTWHNZM2dNY1J3ZlZNNnFrREloRE9ySVRQUTlkclBQRkxHYm9KUUx0bE1kTjlFVlUwd1NrY0dsK3FBa2dIZmJhYU1QN3dQaWZUeUdOdVlzamxhdkQ3U2JVbEVaVGZiVGdlNVZqcmpYKzRreG9aOHVYM0NhN1h3WVhFMUN2YnlkdGJjaE9iTmFZSVdHdnozZ0ZoMm9GRlYyQTVCazFFSTQ1b2t5aVNFU0ora3FNWHMwWFZtODU3Y0U0TkZzMFhhVVhnbS9LRnlRTlp2TzlUOFdoK2dvcHQ5YmJSWkRQTlRHN2ZGbTV0UFRtOGJDRHZFejdLRzBGMmh3ZE9HSmhwL2cyQzRKb3lZYXFyKzZDa2dqNVhmZEtHMnptd2pxU3lmTmRURzJQVFNJdTdGSVcreXBQMWNYeHA4Um1KWXZMN2tmazZLQ2pCRkxXbTlpZzBJallWVkswM1gxMXR0ZWRQclFCdHo3WFdINER3RzlPNDVrRngrVFpFREFUM2QyNEsxMVh2a2VmU2VwS2xFbVN1TUUwNHR2THJMVDlzcngwUS84aWU0bFdqSzQ0Ni8rMWRXTHFFVERHRURDU090c05nVjBUUVMrWUxkWWpEbUtjSGRyVE05R0h2dmNzRElsanJ3aTRkdS9YU3FyMHk1b2hZNm1SN01qbHRINWNFY1lWaldQeEtSeWJXSWtxMkx6WVVjNnZyakhnOUFkcllhVHZLblRzOFRqQkxNQ3AzZFdDdStsSE42bU1LK1hyMG9hRUUrRzVMajhrOWpSaGtiYjEwZ3hRWWl1WHhZK3FMd0wvenNGckc0UHVtbTRNQUJwSmd5b0NLYUxpTyttblN0bXNqZ0R1QjhhdGQ2KytZUWwxdE9USkJlcXVtTjl1WnBVVHRzSEpXVEN5Q0ZHbEhoNGRxVDQzWWlNK0RWSmxWdGFwYVZyMTRjblJkRmdOT1VkNzlkbTlESlhtYlhPRzdib0tJZ2IxU1ZJSHg5dWpWTnllbWt4d3JsMDA5YkVmRFNzdEVObjc5SlR0aEdacGFrWWN0QjNmMUJWM0ZCbHVDL2I3TDFVbnRMYUhPaW9QTlplYVFWTWZxNFZkUTlWNGhnbUJjaHJIOUZyYURIaExSWUtvZ1VRay9zQVVaUjVaZ3VxMjB5dUFDTWxJcjlzZ1JYenY4ajZrWTZUZVh1aWtKMFNzaVFDd3IzRGQiLCJkYXRha2V5IjoiQVFFQkFIaHdtMFlhSVNKZVJ0Sm01bjFHNnVxZWVrWHVvWFhQZTVVRmNlOVJxOC8xNHdBQUFINHdmQVlKS29aSWh2Y05BUWNHb0c4d2JRSUJBREJvQmdrcWhraUc5dzBCQndFd0hnWUpZSVpJQVdVREJBRXVNQkVFRENPb2M0QmRvbzNDdFVYd05BSUJFSUE3T1VBaTlSYkY5UHI0ZWFFTW9PQW5kQ1Zvd21aTVJJUmNUaTJLWEpCeG82ZVYyWEZiY0l3WG93Z2hLUmdhRGxSazN6Z0pSSmYzbHFwSm9iMD0iLCJ2ZXJzaW9uIjoiMiIsInR5cGUiOiJEQVRBX0tFWSIsImV4cGlyYXRpb24iOjE2MTMzODkyNjd9 https://051096112438.dkr.ecr.us-east-1.amazonaws.com

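The output is a `docker login` command that authenticates your Docker client to the Amazon ECR registry. Copy and paste that command into a terminal to authenticate your Docker CLI. The authorization token it contains is valid for the specified registry for 12 hours. (Note that `aws ecr get-login` only exists in version 1 of the AWS CLI. In version 2 it was replaced by `aws ecr get-login-password`, whose output you pipe into `docker login --username AWS --password-stdin <registry>`. This also avoids the `--password` warning shown below.)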

$ docker login -u AWS -p eyJwYXlsb2FkIjoia0ZkK096YXBEL2pOK1BERkhkalBDbWRSeHFvVHZTUXgyOVdBdUp0czF0Z1RsN1NTMEVsWTlWQXA1d0gxajBta0pJRmxDNit3UGNBTTcvS3Uxd1BGdXVPWlF6emZ4c3hMdDVxRzBKWjFLN3VOTDFuV01SN0xmYXpPL1d1THczSlo1Wm1CMWJ4MUducU0xRDJnZ1FJWEtWbmRoTEtTSWFnOU1Tb0tpQk9Md3VOSWkyZUFoV3BOWUg3cUVHZzd1UEE2NGlHUzI0ZXJTWHNZM2dNY1J3ZlZNNnFrREloRE9ySVRQUTlkclBQRkxHYm9KUUx0bE1kTjlFVlUwd1NrY0dsK3FBa2dIZmJhYU1QN3dQaWZUeUdOdVlzamxhdkQ3U2JVbEVaVGZiVGdlNVZqcmpYKzRreG9aOHVYM0NhN1h3WVhFMUN2YnlkdGJjaE9iTmFZSVdHdnozZ0ZoMm9GRlYyQTVCazFFSTQ1b2t5aVNFU0ora3FNWHMwWFZtODU3Y0U0TkZzMFhhVVhnbS9LRnlRTlp2TzlUOFdoK2dvcHQ5YmJSWkRQTlRHN2ZGbTV0UFRtOGJDRHZFejdLRzBGMmh3ZE9HSmhwL2cyQzRKb3lZYXFyKzZDa2dqNVhmZEtHMnptd2pxU3lmTmRURzJQVFNJdTdGSVcreXBQMWNYeHA4Um1KWXZMN2tmazZLQ2pCRkxXbTlpZzBJallWVkswM1gxMXR0ZWRQclFCdHo3WFdINER3RzlPNDVrRngrVFpFREFUM2QyNEsxMVh2a2VmU2VwS2xFbVN1TUUwNHR2THJMVDlzcngwUS84aWU0bFdqSzQ0Ni8rMWRXTHFFVERHRURDU090c05nVjBUUVMrWUxkWWpEbUtjSGRyVE05R0h2dmNzRElsanJ3aTRkdS9YU3FyMHk1b2hZNm1SN01qbHRINWNFY1lWaldQeEtSeWJXSWtxMkx6WVVjNnZyakhnOUFkcllhVHZLblRzOFRqQkxNQ3AzZFdDdStsSE42bU1LK1hyMG9hRUUrRzVMajhrOWpSaGtiYjEwZ3hRWWl1WHhZK3FMd0wvenNGckc0UHVtbTRNQUJwSmd5b0NLYUxpTyttblN0bXNqZ0R1QjhhdGQ2KytZUWwxdE9USkJlcXVtTjl1WnBVVHRzSEpXVEN5Q0ZHbEhoNGRxVDQzWWlNK0RWSmxWdGFwYVZyMTRjblJkRmdOT1VkNzlkbTlESlhtYlhPRzdib0tJZ2IxU1ZJSHg5dWpWTnllbWt4d3JsMDA5YkVmRFNzdEVObjc5SlR0aEdacGFrWWN0QjNmMUJWM0ZCbHVDL2I3TDFVbnRMYUhPaW9QTlplYVFWTWZxNFZkUTlWNGhnbUJjaHJIOUZyYURIaExSWUtvZ1VRay9zQVVaUjVaZ3VxMjB5dUFDTWxJcjlzZ1JYenY4ajZrWTZUZVh1aWtKMFNzaVFDd3IzRGQiLCJkYXRha2V5IjoiQVFFQkFIaHdtMFlhSVNKZVJ0Sm01bjFHNnVxZWVrWHVvWFhQZTVVRmNlOVJxOC8xNHdBQUFINHdmQVlKS29aSWh2Y05BUWNHb0c4d2JRSUJBREJvQmdrcWhraUc5dzBCQndFd0hnWUpZSVpJQVdVREJBRXVNQkVFRENPb2M0QmRvbzNDdFVYd05BSUJFSUE3T1VBaTlSYkY5UHI0ZWFFTW9PQW5kQ1Zvd21aTVJJUmNUaTJLWEpCeG82ZVYyWEZiY0l3WG93Z2hLUmdhRGxSazN6Z0pSSmYzbHFwSm9iMD0iLCJ2ZXJzaW9uIjoiMiIsInR5cGUiOiJEQVRBX0tFWSIsImV4cGlyYXRpb24iOjE2MTMzODkyNjd9 https://051096112438.dkr.ecr.us-east-1.amazonaws.com

WARNING! Using --password via the CLI is insecure. Use --password-stdin.

WARNING! Your password will be stored unencrypted in /home/userxx/.docker/config.json.

Configure a credential helper to remove this warning. See

https://docs.docker.com/engine/reference/commandline/login/#credentials-store

Login Succeeded

- Identify the image to push. Run the `docker images` command to list the images on your system.

```
$ docker images
REPOSITORY   TAG          IMAGE ID       CREATED         SIZE
<none>       <none>       9b51cc1cddd1   2 hours ago     368MB
<none>       <none>       4144a6eeb4fa   2 hours ago     247MB
<none>       <none>       650b405ecff6   3 hours ago     267MB
<none>       <none>       19213d5f426d   3 hours ago     87.9MB
<none>       <none>       e019f9b4bc2d   3 hours ago     255MB
python       3.6-alpine   d39b82549c6d   10 days ago     40.7MB
node         8-alpine     2b8fcdc6230a   13 months ago   73.5MB
openjdk      8-alpine     a3562aa0b991   21 months ago   105MB
golang       1.9-alpine   b0260be938c6   2 years ago     240MB
```

The output only shows image IDs, because the images were built without a name or tag. The output from building the front-end tells us that the image we need is e019f9b4bc2d:

```
Step 7/7 : CMD ["sh", "-c", "npm start" ]
 ---> Running in 74f2881dbea3
Removing intermediate container 74f2881dbea3
 ---> e019f9b4bc2d
Successfully built e019f9b4bc2d
```

- Tag your image with the Amazon ECR registry, repository, and optional image tag name combination to use.

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf$ docker tag e019f9b4bc2d 051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-frontend:frontend
```

- Push the image using the `docker push` command.

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf$ docker push 051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-frontend:frontend
The push refers to repository [051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-frontend]
9435ddc22098: Pushed
da0867928195: Pushed
8d9458bbe754: Pushed
1eca433c501f: Pushed
6b73202914ee: Pushed
9851b6482f16: Pushed
540b4f5b2c41: Pushed
6b27de954cca: Pushed
frontend: digest: sha256:3e06e5b1f65af67f911f26ecb9bc5b86f094b0eda6e414d9e58c5d4271a40fc6 size: 1994
```

The output above contains information about the image that was just pushed, including its digest (a SHA-256 hash).
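The reference passed to `docker tag` and `docker push` above always has the same shape: the registry host (account ID, `dkr.ecr` and the region), the repository name, and an optional tag. The sketch below assembles it in Python. The function name `ecr_image_ref` is my own, and the host pattern assumes a standard (non-China) AWS region.

```python
def ecr_image_ref(account_id: str, region: str, repository: str, tag: str = "latest") -> str:
    """Assemble <account>.dkr.ecr.<region>.amazonaws.com/<repository>:<tag>.

    The default tag "latest" mirrors what Docker applies when a reference
    is given without an explicit tag.
    """
    registry = f"{account_id}.dkr.ecr.{region}.amazonaws.com"
    return f"{registry}/{repository}:{tag}"


if __name__ == "__main__":
    # The reference used for the front-end image above.
    print(ecr_image_ref("051096112438", "us-east-1", "team4-frontend", "frontend"))
```

Leaving out the tag, as we do for the remaining services, yields a reference ending in `:latest`, which is why the later pushes report a `latest` digest.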

Capture The Flag

The challenge asks for the number of characters in the hash 3e06e5b1f65af67f911f26ecb9bc5b86f094b0eda6e414d9e58c5d4271a40fc6, written in binary. The hash has 64 characters (a SHA-256 digest is 256 bits, and each hex character encodes 4 bits), and 64 in binary is 1000000.

DevSlopCTF{1000000}
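The same arithmetic can be checked with a few lines of Python instead of by hand. This is only a sketch: `digest_length_flag` is a hypothetical helper, and it strips an optional `sha256:` prefix before counting.

```python
def digest_length_flag(digest: str) -> str:
    """Count the hex characters of a digest and return the count in binary, wrapped as a flag."""
    hex_part = digest.split(":", 1)[-1]  # drop an optional "sha256:" prefix
    return f"DevSlopCTF{{{len(hex_part):b}}}"  # ":b" formats an int in binary


if __name__ == "__main__":
    digest = "sha256:3e06e5b1f65af67f911f26ecb9bc5b86f094b0eda6e414d9e58c5d4271a40fc6"
    print(digest_length_flag(digest))  # DevSlopCTF{1000000}
```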

Auth API (199 Points)

Let’s follow the same procedure to push the Auth API image to AWS ECR.

- Our first step is to log in.

userxx@lsv-u01:~$ aws ecr get-login --region us-east-1 --no-include-email

docker login -u AWS -p eyJwYXlsb2FkIjoiYU16QnErazlVeC80RFdCNDNtSFFYMGduQThxV2d0QTB6c093cDF6T1JLY3V3dVVoZ2xVcmFpU2xranNOWHZMb21QUDExVG1zMTdVbmVzWWRDTTZJUng4MGlyM2hQRHIxcFdlZURBOEkzd3ZTR3ZORGRlOTBuWUx3SFBPMzVvL2cweU9KWC9jRzl2b3k3VkhrOWdkKzErVklGVHFwTHE4RXBRMnBteHZYbnVDMnBkemFlK1hUeGJSWkdWNnZBSGtmQW9xNm1vMnNLREFPc2NGakRZVzVGMWRZOFdybjVmcGRwekVTd0U3RnlUM2U5YlpqOEpUcW5UWndkZHFXeUY4Zm1KK0NKQkpMMmdVVzdwYTZLUDNkTXdrdXpuNEg5dUhkaDhoSGs1OCt5eVpqcHduaEQvRHF3TzJveFMwN2xtS1dPbTkxc0I5U0RlckswQ2NrTlpDZURlSHlXVnZDOE5tS3MxbnhhRlJDejFjSXRiTTZKMHU3OUp1WUorSURXUVVyejVoTzlYcHhQTmxka1FNYlZiWHBVSy9waE1wT0JvQXFZeFlUbmFTMWRud1hCVGoxb3hYSWdRTzFMWFIzR1hyVTlHcFZ0N09wYWw2WDcvOEZ2bjk1TDFzSFZwV1NMWGdna24xMzFCWU5sSkpaQ2hKTkUrOGxCcks5MUZ0Mjg2dUNYbExuQkZLOVRRM0tOM1k4OTdHeC9qREo0dUpOWTF3d3pUY3pGRkJtaGZrbnNoQU9pTXgzRUFud1kvMWhucHZRWmo2VzVOODlHdzNFem4xZWNncUp4ZmdFakxDdmdRdkllZVB2L3NuRUpXR1VzVE1LY05lWkdTbWtXY3U5RTdCaEFzUjFZQ1VGclNTUTh6c04xbzloQ3REN1hvdXgrVWhXaDZtbDZKaXNWL2dSOE5uZTl0S0lUcGVEMkdaZjJOSHoyeXRHQk9Sbm85NDUrWHdkVTkyVzU3SUV0dEY5UkhKQlBnNWtUQXlRQmFLRkk5SlVCWlFJdDFYTGgzSEQwWE10QkVJemVzeTdPMEVqRU9iS09JdjVQRHRMbzBXdG5vM0lzb0Fxb2MyN2VPMG5oYmt0M1pwZUQ0RDhZaDViUkt2WGZmSHpXRXk5V0lvZE1lSW5xSWZCWW16eFNaYUc5V2hwQTd1dVRmb3pXcnY2WVpkemVkUmRNNzBsemxzaHA1blBDYTdiMEtBQTJEVENqZWV0UkYxWE5vdUlvMHBFM0VPTDN1MlkwV01uSDQzbGVxeHZoRUVMS293eGhtd2FKeWtKSkFoTXZHLzlRRU9PQ0NaRTVCZDZMenB5MDF2YnlXM21wMi9HQkpwMGNwMDAzZFo3Wk9nUEQ5eCtiUHZmUTVRUzBMYlFrOGE2T2c1YUhSYitnMkZBU2FzcThEbUNETWlTNHJBRG5NNkJXVVJhbVF4UXNGeWNKZU5KWDhnSFJNc3NjcUxRcS92Mnc2U1EiLCJkYXRha2V5IjoiQVFFQkFIaHdtMFlhSVNKZVJ0Sm01bjFHNnVxZWVrWHVvWFhQZTVVRmNlOVJxOC8xNHdBQUFINHdmQVlKS29aSWh2Y05BUWNHb0c4d2JRSUJBREJvQmdrcWhraUc5dzBCQndFd0hnWUpZSVpJQVdVREJBRXVNQkVFRE5yUnpEWWxuYU84SmIrakd3SUJFSUE3UVYxMExXMm56OWs5U0d4bkRrSkxGejgvTGgwQ0MrUytmcDIwNFhtMUQyM2l0Y3dKbGNJM1doeDdMTTUvVW1tUnFrMk4xV25sQkM0QmRDMD0iLCJ2ZXJzaW9uIjoiMiIsInR5cGUiOiJEQVRBX0tFWSIsImV4cGlyYXRpb24iOjE2MTM1NTY3ODB9 https://051096112438.dkr.ecr.us-east-1.amazonaws.com

The resulting output is a docker login command that we use to authenticate our Docker client to the Amazon ECR registry.

userxx@lsv-u01:~$ docker login -u AWS -p eyJwYXlsb2FkIjoiYU16QnErazlVeC80RFdCNDNtSFFYMGduQThxV2d0QTB6c093cDF6T1JLY3V3dVVoZ2xVcmFpU2xranNOWHZMb21QUDExVG1zMTdVbmVzWWRDTTZJUng4MGlyM2hQRHIxcFdlZURBOEkzd3ZTR3ZORGRlOTBuWUx3SFBPMzVvL2cweU9KWC9jRzl2b3k3VkhrOWdkKzErVklGVHFwTHE4RXBRMnBteHZYbnVDMnBkemFlK1hUeGJSWkdWNnZBSGtmQW9xNm1vMnNLREFPc2NGakRZVzVGMWRZOFdybjVmcGRwekVTd0U3RnlUM2U5YlpqOEpUcW5UWndkZHFXeUY4Zm1KK0NKQkpMMmdVVzdwYTZLUDNkTXdrdXpuNEg5dUhkaDhoSGs1OCt5eVpqcHduaEQvRHF3TzJveFMwN2xtS1dPbTkxc0I5U0RlckswQ2NrTlpDZURlSHlXVnZDOE5tS3MxbnhhRlJDejFjSXRiTTZKMHU3OUp1WUorSURXUVVyejVoTzlYcHhQTmxka1FNYlZiWHBVSy9waE1wT0JvQXFZeFlUbmFTMWRud1hCVGoxb3hYSWdRTzFMWFIzR1hyVTlHcFZ0N09wYWw2WDcvOEZ2bjk1TDFzSFZwV1NMWGdna24xMzFCWU5sSkpaQ2hKTkUrOGxCcks5MUZ0Mjg2dUNYbExuQkZLOVRRM0tOM1k4OTdHeC9qREo0dUpOWTF3d3pUY3pGRkJtaGZrbnNoQU9pTXgzRUFud1kvMWhucHZRWmo2VzVOODlHdzNFem4xZWNncUp4ZmdFakxDdmdRdkllZVB2L3NuRUpXR1VzVE1LY05lWkdTbWtXY3U5RTdCaEFzUjFZQ1VGclNTUTh6c04xbzloQ3REN1hvdXgrVWhXaDZtbDZKaXNWL2dSOE5uZTl0S0lUcGVEMkdaZjJOSHoyeXRHQk9Sbm85NDUrWHdkVTkyVzU3SUV0dEY5UkhKQlBnNWtUQXlRQmFLRkk5SlVCWlFJdDFYTGgzSEQwWE10QkVJemVzeTdPMEVqRU9iS09JdjVQRHRMbzBXdG5vM0lzb0Fxb2MyN2VPMG5oYmt0M1pwZUQ0RDhZaDViUkt2WGZmSHpXRXk5V0lvZE1lSW5xSWZCWW16eFNaYUc5V2hwQTd1dVRmb3pXcnY2WVpkemVkUmRNNzBsemxzaHA1blBDYTdiMEtBQTJEVENqZWV0UkYxWE5vdUlvMHBFM0VPTDN1MlkwV01uSDQzbGVxeHZoRUVMS293eGhtd2FKeWtKSkFoTXZHLzlRRU9PQ0NaRTVCZDZMenB5MDF2YnlXM21wMi9HQkpwMGNwMDAzZFo3Wk9nUEQ5eCtiUHZmUTVRUzBMYlFrOGE2T2c1YUhSYitnMkZBU2FzcThEbUNETWlTNHJBRG5NNkJXVVJhbVF4UXNGeWNKZU5KWDhnSFJNc3NjcUxRcS92Mnc2U1EiLCJkYXRha2V5IjoiQVFFQkFIaHdtMFlhSVNKZVJ0Sm01bjFHNnVxZWVrWHVvWFhQZTVVRmNlOVJxOC8xNHdBQUFINHdmQVlKS29aSWh2Y05BUWNHb0c4d2JRSUJBREJvQmdrcWhraUc5dzBCQndFd0hnWUpZSVpJQVdVREJBRXVNQkVFRE5yUnpEWWxuYU84SmIrakd3SUJFSUE3UVYxMExXMm56OWs5U0d4bkRrSkxGejgvTGgwQ0MrUytmcDIwNFhtMUQyM2l0Y3dKbGNJM1doeDdMTTUvVW1tUnFrMk4xV25sQkM0QmRDMD0iLCJ2ZXJzaW9uIjoiMiIsInR5cGUiOiJEQVRBX0tFWSIsImV4cGlyYXRpb24iOjE2MTM1NTY3ODB9 https://051096112438.dkr.ecr.us-east-1.amazonaws.com

WARNING! Using --password via the CLI is insecure. Use --password-stdin.

WARNING! Your password will be stored unencrypted in /home/userxx/.docker/config.json.

Configure a credential helper to remove this warning. See

https://docs.docker.com/engine/reference/commandline/login/#credentials-store

Login Succeeded

- Next, identify the image to push from the list of images on our system. In our case, the image is 9b51cc1cddd1, based on the output of our `docker build` command:

```
Step 8/8 : CMD /go/src/app/auth-api
 ---> Running in 80450c71e552
Removing intermediate container 80450c71e552
 ---> 9b51cc1cddd1
Successfully built 9b51cc1cddd1
```

- Tag the image with the Amazon ECR registry, repository, and optional image tag name combination to use.

```
userxx@lsv-u01:~$ docker tag 9b51cc1cddd1 051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-auth-api:authapi
```

- Next, push the image using the `docker push` command. The repository we are pushing to is team4-auth-api.

```
userxx@lsv-u01:~$ docker push 051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-auth-api:authapi
The push refers to repository [051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-auth-api]
ad45f0dd141c: Pushed
7573babcaed4: Pushed
8bd6a8085cd6: Pushed
8e5bc3865c32: Pushed
14aff4165e19: Pushed
9ae8db0a5cb6: Pushed
c68b9f081446: Pushed
98600d12b3d1: Pushed
3d5153698765: Pushed
5222eeb73419: Pushed
8b34f02ac284: Pushed
73046094a9b8: Pushed
authapi: digest: sha256:2630d7f662c5e29de4e3013990488d9e927ecb57b83b999cc28e372b3df0d548 size: 2829
```

Capture The Flag

The flag is the number of bits in the resulting digest 2630d7f662c5e29de4e3013990488d9e927ecb57b83b999cc28e372b3df0d548, written in hexadecimal. The digest is made up of 64 hex characters and, as the sha256: prefix indicates, was produced with the SHA-256 hashing algorithm. SHA-256 outputs a 256-bit digest, as its name indicates (64 hex characters × 4 bits each). The value 256 in hexadecimal is 0x100.

DevSlopCTF{100}
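As with the previous flag, a short Python sketch can do the conversion. `digest_bits_flag` is a hypothetical helper; it derives the bit count from the number of hex characters and cross-checks it against the digest size that `hashlib` reports for SHA-256.

```python
import hashlib


def digest_bits_flag(digest: str) -> str:
    """Return the number of bits in a hex digest, written in hexadecimal, as a flag."""
    hex_part = digest.split(":", 1)[-1]  # drop an optional "sha256:" prefix
    bits = len(hex_part) * 4             # each hex character encodes 4 bits
    return f"DevSlopCTF{{{bits:x}}}"     # ":x" gives lowercase hex without the "0x" prefix


if __name__ == "__main__":
    digest = "sha256:2630d7f662c5e29de4e3013990488d9e927ecb57b83b999cc28e372b3df0d548"
    # SHA-256 digests are 32 bytes, i.e. 256 bits.
    assert len(digest.split(":", 1)[-1]) * 4 == hashlib.sha256().digest_size * 8
    print(digest_bits_flag(digest))  # DevSlopCTF{100}
```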

TODOs API (199 Points)

- The output from the `docker build` command shows that the image created was 19213d5f426d.

```
Step 7/7 : CMD ["sh", "-c", "npm start" ]
 ---> Running in d25ef931c0f1
Removing intermediate container d25ef931c0f1
 ---> 19213d5f426d
Successfully built 19213d5f426d
```

- Tag the image with the Amazon ECR registry, repository, and optional image tag name combination to use.

```
userxx@lsv-u01:~$ docker tag 19213d5f426d 051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-todos-api
```

- Push the image to the team4-todos-api repository in AWS ECR.

```
userxx@lsv-u01:~$ docker push 051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-todos-api
The push refers to repository [051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-todos-api]
7ab8cccca71a: Layer already exists
8fa78e380e47: Layer already exists
dab0d31f68cf: Layer already exists
048bb3a54113: Layer already exists
6b73202914ee: Layer already exists
9851b6482f16: Layer already exists
540b4f5b2c41: Layer already exists
6b27de954cca: Layer already exists
latest: digest: sha256:802c12ab8c26a87bbb8e96040373caa1b03e16477653924197704656116b1e8d size: 1992
```

Capture The Flag

The output above contains information about the image that was just pushed, including its tag. Since we did not specify a tag when running `docker tag`, Docker used the default tag, latest. We are to run the tag through an MD5 hash function, which we can do with CyberChef. The flag is the resulting 128-bit hash value 71ccb7a35a452ea8153b6d920f9f190e.

DevSlopCTF{71ccb7a35a452ea8153b6d920f9f190e}
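If you prefer not to use CyberChef, Python's standard `hashlib` module can compute the MD5 digest locally. A minimal sketch, assuming the tag is hashed as its UTF-8 bytes with no trailing newline; `md5_flag` is a hypothetical helper name.

```python
import hashlib


def md5_flag(text: str) -> str:
    """MD5-hash a string (UTF-8 encoded) and wrap the 32-character hex digest as a flag."""
    digest = hashlib.md5(text.encode("utf-8")).hexdigest()  # 128 bits = 32 hex characters
    return f"DevSlopCTF{{{digest}}}"


if __name__ == "__main__":
    print(md5_flag("latest"))
```

As far as I can tell, this approach does not carry over directly to the next two flags: `hashlib` does not provide RIPEMD-320, and Whirlpool is only reachable through `hashlib.new("whirlpool")` when the underlying OpenSSL build exposes it, so CyberChef remains the simpler option there.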

Users API (199 Points)

From the output of docker build, we see our image is 650b405ecff6.

```
Step 9/9 : CMD ["java", "-jar", "./target/users-api-0.0.1-SNAPSHOT.jar"]
 ---> Running in 1f29cef84675
Removing intermediate container 1f29cef84675
 ---> 650b405ecff6
Successfully built 650b405ecff6
```
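In every section so far, the image ID comes from the final `Successfully built` line of the build output. The sketch below pulls it out with a regular expression. It assumes the classic (non-BuildKit) builder output shown here, whose IDs are 12 lowercase hex characters; `built_image_id` is a hypothetical helper name.

```python
import re
from typing import Optional

# Classic docker build output ends with "Successfully built <12-character image ID>".
_BUILT_RE = re.compile(r"^Successfully built ([0-9a-f]{12})\s*$", re.MULTILINE)


def built_image_id(build_output: str) -> Optional[str]:
    """Return the ID from the last "Successfully built" line, or None if there is none."""
    matches = _BUILT_RE.findall(build_output)
    return matches[-1] if matches else None


if __name__ == "__main__":
    sample = """Step 9/9 : CMD ["java", "-jar", "./target/users-api-0.0.1-SNAPSHOT.jar"]
 ---> Running in 1f29cef84675
Removing intermediate container 1f29cef84675
 ---> 650b405ecff6
Successfully built 650b405ecff6
"""
    print(built_image_id(sample))  # 650b405ecff6
```

If you are rebuilding anyway, `docker build -q` prints only the image ID, and `docker build -t` names the image at build time so that `docker images` no longer lists it as `<none>`.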

Let’s tag the image and push it to the team4-users-api repo.

```
userxx@lsv-u01:~$ docker tag 650b405ecff6 051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-users-api
userxx@lsv-u01:~$ docker push 051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-users-api
The push refers to repository [051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-users-api]
3671acbc6b59: Pushed
89d67a3983f8: Pushed
4458a83d565d: Pushed
cba0f06d0372: Pushed
5719b8535421: Pushed
42183e7c207e: Pushed
ceaf9e1ebef5: Pushed
9b9b7f3d56a0: Pushed
f1b5933fe4b5: Pushed
latest: digest: sha256:b98683bc94b3d2bf322106864dba184013f39b823a27262fe5e8616cf4662e09 size: 2204
```

Capture The Flag

The output above contains information about the image that was just pushed, including its tag, which again defaulted to latest because we did not specify one. This time we are to run the tag through the RIPEMD-320 hash function, again using CyberChef. The flag is the resulting 320-bit hash value a3dabeca8436d7dc296f76aac3e573b6337801e57dcc99a3d1c0ce9b26f3df166bbb2a58c0d8d2b3.

DevSlopCTF{a3dabeca8436d7dc296f76aac3e573b6337801e57dcc99a3d1c0ce9b26f3df166bbb2a58c0d8d2b3}

Log Message Processor (199 Points)

The last image we need to push is that of the Log Message Processor. From the output of docker build, we see that the image id is 4144a6eeb4fa.

```
Step 7/7 : CMD ["python3","-u","main.py"]
 ---> Running in 6601df6950f1
Removing intermediate container 6601df6950f1
 ---> 4144a6eeb4fa
Successfully built 4144a6eeb4fa
```

Let’s tag the image and push it to the team4-log-message-processor repo.

```
userxx@lsv-u01:~$ docker tag 4144a6eeb4fa 051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-log-message-processor
userxx@lsv-u01:~$ docker push 051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-log-message-processor
The push refers to repository [051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-log-message-processor]
f8947607aef2: Pushed
5c40e8abb567: Pushed
d9d85f3b96f1: Pushed
064ef86dc94e: Pushed
21bf1f3e46da: Pushed
4ba796985418: Pushed
56a0fed9c27d: Pushed
565113e78b75: Pushed
27448fb13b80: Pushed
1119ff37d4a9: Pushed
latest: digest: sha256:7f5d147e598a8f3ac5cda36f26f77f64c641129192580abbd1131ce9a9f8776b size: 2412
```

Capture The Flag

The output above contains information about the image that was just pushed, including its tag, which again defaulted to latest. We are to run the tag through the Whirlpool hash function, again using CyberChef. The flag is the resulting 512-bit hash value e8fc4fa250e0974faef1212664c143cf1ee2ca052e7155a0ec246a0f2bf90376760f3cb64023af05d7b09ee0760a62bbec0666f7a24c93ed3bcf7ededf325bf4.

DevSlopCTF{e8fc4fa250e0974faef1212664c143cf1ee2ca052e7155a0ec246a0f2bf90376760f3cb64023af05d7b09ee0760a62bbec0666f7a24c93ed3bcf7ededf325bf4}

Deploying Microservices to Kubernetes

Introduction (3)

In this section, we learn how to deploy each microservice to the Kubernetes cluster. The primer introduces Pods, Deployments, labels and namespaces, and shows an example configuration file for a Pod:

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: static-web
  labels:
    role: myrole
spec:
  containers:
    - name: web
      image: nginx
      ports:
        - name: web
          containerPort: 80
          protocol: TCP
```

We also learn that the cluster used for this challenge has one namespace per team. Ours is team4. Every time we deploy a resource during this challenge, we have to tell Kubernetes to deploy it to our own team’s namespace.

Capture The Flag

This flag is to confirm that we have understood the primer.

DevSlopCTF{ok}

Front-End (398 Points)

We need to create a folder called k8s inside the frontend folder to store the configuration files related to the front-end.

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/frontend$ mkdir k8s
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/frontend$ cd k8s/
```

Next, create a file called deployment.yaml.

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/frontend/k8s$ touch deployment.yaml
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/frontend/k8s$ ls
deployment.yaml
```

The requirements for the contents of the configuration file are as follows:

- The configuration file should define a Deployment (kind: Deployment).

- The name of the Deployment should be frontend (name: frontend).

- The container should run in your team’s namespace (team4). We will specify this when we run the deployment command.

- There should be one single frontend container running at a time (replicas: 1).

- Assign a label to the Pod: app: frontend. The selector field defines how the Deployment finds which Pods to manage. In this case, we select a label that is defined in the Pod template.

- When defining a container:

  - The name of the container should be frontend (containers: -> name: frontend).

  - The image should be downloaded from ECR (the cluster already has permission to do that). From our previous task, the image location is 051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-frontend.

  - The container should listen on port 8080 (containerPort: 8080). We also need to give this container port a name; we will call it webport.

- Finally, set the Pod’s restart policy to Always. The official Pod documentation describes the restartPolicy field, so we specify restartPolicy: Always.

This is our finalized config file:

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/frontend/k8s$ gedit deployment.yaml
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/frontend/k8s$ cat deployment.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: frontend
spec:
  replicas: 1
  selector:
    matchLabels:
      app: frontend
  template:
    metadata:
      labels:
        app: frontend
    spec:
      containers:
        - name: frontend
          image: 051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-frontend:frontend
          ports:
            - name: webport
              containerPort: 8080
      restartPolicy: Always
```
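The Deployments in this section differ only in name, image, port and labels, so the manifest could be generated rather than copied and edited by hand. The sketch below builds the same structure as the YAML above as a Python dictionary and prints it as JSON, which `kubectl apply -f` also accepts. The function and its parameters are my own, and the port-name check is a simplified version of the Kubernetes rule that named container ports are at most 15 lowercase alphanumeric characters or hyphens.

```python
import json
import re
from typing import Dict, Optional

# Simplified form of the IANA_SVC_NAME rule Kubernetes applies to named container ports.
_PORT_NAME_RE = re.compile(r"^[a-z0-9]([a-z0-9-]*[a-z0-9])?$")


def deployment_manifest(name: str, image: str, port: int, port_name: str,
                        extra_labels: Optional[Dict[str, str]] = None) -> dict:
    """Build a single-replica Deployment whose selector matches its Pod template labels."""
    if len(port_name) > 15 or not _PORT_NAME_RE.match(port_name):
        raise ValueError(f"invalid container port name: {port_name!r}")
    labels = {"app": name, **(extra_labels or {})}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "replicas": 1,
            "selector": {"matchLabels": dict(labels)},
            "template": {
                "metadata": {"labels": dict(labels)},
                "spec": {
                    "containers": [{
                        "name": name,
                        "image": image,
                        "ports": [{"name": port_name, "containerPort": port}],
                    }],
                    "restartPolicy": "Always",
                },
            },
        },
    }


if __name__ == "__main__":
    manifest = deployment_manifest(
        "frontend",
        "051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-frontend:frontend",
        8080,
        "webport",
    )
    print(json.dumps(manifest, indent=2))
```

Saving the output as, say, deployment.json and running `kubectl apply -f deployment.json --namespace=team4` should have the same effect as the YAML file. Label values are plain strings here, which is also why the YAML for the TODOs API quotes "true": an unquoted true would be parsed as a boolean, which Kubernetes rejects as a label value.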

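Strictly speaking, the image is pulled by the cluster’s nodes, which already have permission to read from ECR, so your local Docker login does not affect the deployment. Logging in again via the CLI does no harm, though, and you will need to if you still have images to push after the 12-hour token has expired.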

userxx@lsv-u01:~/Downloads/Kubernetes-ctf/frontend/k8s$ aws ecr get-login --region us-east-1 --no-include-email

docker login -u AWS -p eyJwYXlsb2FkIjoib1JaUTBycG9zYlRTcityQ1h0TVo5UldiN3N5WEFiMzZQZ3BVUFVFcUI4RkVzMmZ6VDd2ZTRWOTB0T2I3L0lQQUttT1VENW5sc29tSHA4NDdadXpPcEZYTVBSdlRGUzBEcGsvaFVVRjJLSkgyWE1kcmxOc1g1bHh6MG1Ea0lSTEd3YXhZVGUyK2RRWXpyZ0FpeG81dVBUYzIxb2dhRVhYZFoxSWhTKzdWb0pRd3dUdzdyZzJuK0VvODJ2b1N1VjNRbVVIbExHNzV3MGN3U3d2ZVNrdzNJMElTNnlWcTRKMVlVRC9NemduUVp1VXd4d0tlanMvUXRGQ0QzYTN2YndadHdVeDNOYzNaaFcxbGRCMXJOV1FzNk0vRXBoMVdMbHdLMjhEWjJQcTZXTkluUGU3Qm1tbStUVlZ1bVJSRDNxd0twdXV0ZHI4N3A3ZVdsZ2V2bms0QlUzSzRBNTBqeEZGWHZwTWc3aVNCbmI1bEt6NmVGN3Fla3BqRVpyMU1WMFp0VWYvOWUyUXl1VndCZzRvSW5saW5JYUlPbFhYbHJaR2JlKzd3cHg5WFpxdFZld25oWjRzekpsOFpITmJ2ZUs0Uk9LK2lpZ3dkS0UyaDk5VGlHYmVVZHhNam9RYWE4VWVIK0tMNktJQmxBV25YNVlhdHBRbGxTQzh4QVF3N1ltNkpJSnlldVoyaGdSWU5ydEpCY0hIMEJSSmxrZ0NhWm56d1BQZy8yTU9Pc2REK1lkb3p6TmNxMVZjT2VnRE9yQzc3OVRTSGV2V2NqZC9zaTRHWGt3SkRqOWdjc1o0aFBQeWZxYUtwdk9jUENKWklGc3BBQXpLbHByMWQ5ZDhXcHVvS2FiUGhpYTJIZUI1VHRqSkVpWVlhMmhySEEwU01oRGtPNGRhL3M3bEFSZEFrMVJmRVc1cmZGTGFEOTVLOVZBc1ZYN1hpSC9RWGtJM1k1RW53Ym0zNnliY0pKTm42K2dneFhnNXBwMXhBZ09TYUp0UDFRY1NQTVpCK1NwYXYxdCt2K0hNMmdUSXcxQTZIZUxDMk56c3ZXYnlqTENSSW5uVnMvZGNlMnRWeWNLZ3RNdldvcEtWUkZ2V3FiWEllY3JWUXkyNWRaL3MwVHEyZnVGcUNUYllVbkRaenZlV0pILzgxNGhUQ0FyVUZ2c1RtYjhKZkNsUldjT05QV2ZPelpWVzNjT2w3ZFk5cHRoSU10WE9YMWMrLzc5bkpTY0ZFbVZaMjRLTzgyb1dnYmlxSktLc21LYzdsOEZEdjdzRTU0Z1VmRDUxQnF5YkpPN2R2ZytGS0o5L1U1VlI0dFIwcGpVU3k3NVVEZEMwNXJKQXY2TEtTWTRCdUlMWE1kaWNuMTZYaVlBSFVGaGdsa2d4clpqNWw5QW5ocmIzWEo4enhRSGE4R0RYcy9hR2FBajBpbkgzYzVSbTkzbGw5REhvaEN1anRrYnFmRXBpUWNZVTEiLCJkYXRha2V5IjoiQVFFQkFIaHdtMFlhSVNKZVJ0Sm01bjFHNnVxZWVrWHVvWFhQZTVVRmNlOVJxOC8xNHdBQUFINHdmQVlKS29aSWh2Y05BUWNHb0c4d2JRSUJBREJvQmdrcWhraUc5dzBCQndFd0hnWUpZSVpJQVdVREJBRXVNQkVFREs0dkMvRUlwclpJam1nRWhRSUJFSUE3N0J0d3BrMk42eWNyY3pPYTFOa0ZnbXByK3FTaEZvOTBPSXNvYVRtSDZLZ0tJWlBMTjlMb1I1eEc1OElONDZrc3lQdGVpZGR5WVRtUHFSTT0iLCJ2ZXJzaW9uIjoiMiIsInR5cGUiOiJEQVRBX0tFWSIsImV4cGlyYXRpb24iOjE2MTM2NzcyNjd9 https://051096112438.dkr.ecr.us-east-1.amazonaws.com

userxx@lsv-u01:~/Downloads/Kubernetes-ctf/frontend/k8s$ docker login -u AWS -p eyJwYXlsb2FkIjoib1JaUTBycG9zYlRTcityQ1h0TVo5UldiN3N5WEFiMzZQZ3BVUFVFcUI4RkVzMmZ6VDd2ZTRWOTB0T2I3L0lQQUttT1VENW5sc29tSHA4NDdadXpPcEZYTVBSdlRGUzBEcGsvaFVVRjJLSkgyWE1kcmxOc1g1bHh6MG1Ea0lSTEd3YXhZVGUyK2RRWXpyZ0FpeG81dVBUYzIxb2dhRVhYZFoxSWhTKzdWb0pRd3dUdzdyZzJuK0VvODJ2b1N1VjNRbVVIbExHNzV3MGN3U3d2ZVNrdzNJMElTNnlWcTRKMVlVRC9NemduUVp1VXd4d0tlanMvUXRGQ0QzYTN2YndadHdVeDNOYzNaaFcxbGRCMXJOV1FzNk0vRXBoMVdMbHdLMjhEWjJQcTZXTkluUGU3Qm1tbStUVlZ1bVJSRDNxd0twdXV0ZHI4N3A3ZVdsZ2V2bms0QlUzSzRBNTBqeEZGWHZwTWc3aVNCbmI1bEt6NmVGN3Fla3BqRVpyMU1WMFp0VWYvOWUyUXl1VndCZzRvSW5saW5JYUlPbFhYbHJaR2JlKzd3cHg5WFpxdFZld25oWjRzekpsOFpITmJ2ZUs0Uk9LK2lpZ3dkS0UyaDk5VGlHYmVVZHhNam9RYWE4VWVIK0tMNktJQmxBV25YNVlhdHBRbGxTQzh4QVF3N1ltNkpJSnlldVoyaGdSWU5ydEpCY0hIMEJSSmxrZ0NhWm56d1BQZy8yTU9Pc2REK1lkb3p6TmNxMVZjT2VnRE9yQzc3OVRTSGV2V2NqZC9zaTRHWGt3SkRqOWdjc1o0aFBQeWZxYUtwdk9jUENKWklGc3BBQXpLbHByMWQ5ZDhXcHVvS2FiUGhpYTJIZUI1VHRqSkVpWVlhMmhySEEwU01oRGtPNGRhL3M3bEFSZEFrMVJmRVc1cmZGTGFEOTVLOVZBc1ZYN1hpSC9RWGtJM1k1RW53Ym0zNnliY0pKTm42K2dneFhnNXBwMXhBZ09TYUp0UDFRY1NQTVpCK1NwYXYxdCt2K0hNMmdUSXcxQTZIZUxDMk56c3ZXYnlqTENSSW5uVnMvZGNlMnRWeWNLZ3RNdldvcEtWUkZ2V3FiWEllY3JWUXkyNWRaL3MwVHEyZnVGcUNUYllVbkRaenZlV0pILzgxNGhUQ0FyVUZ2c1RtYjhKZkNsUldjT05QV2ZPelpWVzNjT2w3ZFk5cHRoSU10WE9YMWMrLzc5bkpTY0ZFbVZaMjRLTzgyb1dnYmlxSktLc21LYzdsOEZEdjdzRTU0Z1VmRDUxQnF5YkpPN2R2ZytGS0o5L1U1VlI0dFIwcGpVU3k3NVVEZEMwNXJKQXY2TEtTWTRCdUlMWE1kaWNuMTZYaVlBSFVGaGdsa2d4clpqNWw5QW5ocmIzWEo4enhRSGE4R0RYcy9hR2FBajBpbkgzYzVSbTkzbGw5REhvaEN1anRrYnFmRXBpUWNZVTEiLCJkYXRha2V5IjoiQVFFQkFIaHdtMFlhSVNKZVJ0Sm01bjFHNnVxZWVrWHVvWFhQZTVVRmNlOVJxOC8xNHdBQUFINHdmQVlKS29aSWh2Y05BUWNHb0c4d2JRSUJBREJvQmdrcWhraUc5dzBCQndFd0hnWUpZSVpJQVdVREJBRXVNQkVFREs0dkMvRUlwclpJam1nRWhRSUJFSUE3N0J0d3BrMk42eWNyY3pPYTFOa0ZnbXByK3FTaEZvOTBPSXNvYVRtSDZLZ0tJWlBMTjlMb1I1eEc1OElONDZrc3lQdGVpZGR5WVRtUHFSTT0iLCJ2ZXJzaW9uIjoiMiIsInR5cGUiOiJEQVRBX0tFWSIsImV4cGlyYXRpb24iOjE2MTM2NzcyNjd9 
https://051096112438.dkr.ecr.us-east-1.amazonaws.com

WARNING! Using --password via the CLI is insecure. Use --password-stdin.

WARNING! Your password will be stored unencrypted in /home/userxx/.docker/config.json.

Configure a credential helper to remove this warning. See

https://docs.docker.com/engine/reference/commandline/login/#credentials-store

Login Succeeded

We now need to apply the configuration file using the command `kubectl apply -f frontend/k8s/deployment.yaml`. Since we are already in the frontend/k8s folder, we can drop the frontend/k8s/ prefix. We also need to pass our team’s namespace with --namespace=team4.

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/frontend/k8s$ kubectl apply -f deployment.yaml --namespace=team4
deployment.apps/frontend created
```

Capture The Flag

Let’s verify that the container is up and running using the command `kubectl get pods -n <TEAM_NAME>`.

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/frontend/k8s$ kubectl get pods -n team4
NAME                        READY   STATUS    RESTARTS   AGE
frontend-65c8d8874c-z842x   1/1     Running   0          95s
```

Let’s dump the logs from the container. The guide provided shows that we can use the command `kubectl logs my-pod`, so we pass our Pod’s name along with our namespace:

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/frontend/k8s$ kubectl logs frontend-65c8d8874c-z842x -n team4

> frontend@1.0.0 start /usr/src/app
> node build/dev-server.js

[HPM] Proxy created: /login -> http://127.0.0.1:8081
[HPM] Proxy created: /todos -> http://127.0.0.1:8082
[HPM] Proxy created: /zipkin -> http://127.0.0.1:9411/api/v2/spans
[HPM] Proxy rewrite rule created: "^/zipkin" ~> ""
> Starting dev server...

WARNING  Compiled with 4 warnings   07:58:47

warning in ./src/components/Todos.vue
(Emitted value instead of an instance of Error) Do not use v-for index as key on <transition-group> children, this is the same as not using keys.
 @ ./src/components/Todos.vue 6:2-301
 @ ./src/router/index.js
 @ ./src/main.js
 @ multi ./build/dev-client ./src/main.js

warning in ./~/zipkin-transport-http/lib/HttpLogger.js
11:27-34 Critical dependency: require function is used in a way in which dependencies cannot be statically extracted

warning in ./~/zipkin/lib/InetAddress.js
62:23-30 Critical dependency: require function is used in a way in which dependencies cannot be statically extracted

warning in ./~/zipkin-instrumentation-vue-resource/~/zipkin/lib/InetAddress.js
62:23-30 Critical dependency: require function is used in a way in which dependencies cannot be statically extracted

> Listening at http://127.0.0.1:8080
```

As per the instructions, we look for the line `[HPM] Proxy rewrite rule created: "^/zipkin" ~> ""`. The flag is the string inside the first pair of quotes, ^/zipkin.

DevSlopCTF{^/zipkin}
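Every flag in this section is a [REDACTED] placeholder inside a known log line, so the search can be scripted. The sketch below is one way to do it and is not part of the CTF tooling: `find_redacted` (a hypothetical helper) turns a template containing [REDACTED] into a regular expression and returns the text that fills the placeholder. It assumes the rest of the template matches the log line character for character.

```python
import re
from typing import Optional

PLACEHOLDER = "[REDACTED]"


def find_redacted(log_text: str, template: str) -> Optional[str]:
    """Return the text standing in for [REDACTED] in the first log line that matches template."""
    if PLACEHOLDER not in template:
        raise ValueError("template must contain [REDACTED]")
    before, _, after = template.partition(PLACEHOLDER)
    # Escape the literal parts and capture the shortest run of characters between them.
    pattern = re.compile(re.escape(before) + r"(.+?)" + re.escape(after))
    match = pattern.search(log_text)
    return match.group(1) if match else None


if __name__ == "__main__":
    logs = '[HPM] Proxy rewrite rule created: "^/zipkin" ~> ""\n> Starting dev server...'
    print(find_redacted(logs, '[HPM] Proxy rewrite rule created: "[REDACTED]" ~> ""'))  # ^/zipkin
```

In practice you would feed it the output of `kubectl logs <pod> -n team4`, for example captured to a file.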

Auth API (398 Points)

Let’s create a folder to store our files including the deployment.yaml file.

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/auth-api$ mkdir k8s
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/auth-api$ cd k8s/
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/auth-api/k8s$ touch deployment.yaml
```

The requirements this time are as follows:

- This configuration file should define a Deployment, and NOT a Pod (kind: Deployment)

- The name of the Deployment should be auth-api (name: auth-api)

- The container should run in your team’s namespace (team4; this will be specified when we run the deployment command)

- There should be one single auth-api container running at a time (replicas: 1)

- Assign a label to the Pod: app: auth-api (this is specified under template)

- When defining a container:

  - The name of the container should be auth-api

  - The image should be downloaded from ECR (the cluster already has permission to do that)

  - The container should listen on port 8081 (tip: give a name to this container port)

- Finally, set the Pod’s restart policy to Always

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/auth-api/k8s$ cat deployment.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: auth-api
spec:
  replicas: 1
  selector:
    matchLabels:
      app: auth-api
  template:
    metadata:
      labels:
        app: auth-api
    spec:
      containers:
        - name: auth-api
          image: 051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-auth-api:authapi
          ports:
            - name: authport
              containerPort: 8081
      restartPolicy: Always
```

Next, we need to deploy the Auth API.

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/auth-api/k8s$ kubectl apply -f deployment.yaml --namespace=team4
deployment.apps/auth-api created
```

Capture The Flag

We can verify that the pod is running.

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/auth-api/k8s$ kubectl get pods -n team4
NAME                       READY   STATUS    RESTARTS   AGE
auth-api-77b95f88c-kmg7v   1/1     Running   0          4m59s
```

We are instructed to dump the logs of the auth-api container and pay attention to the JSON payload, specifically the part "echo","file":"[REDACTED]","line".

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/auth-api/k8s$ kubectl logs auth-api-77b95f88c-kmg7v -n team4
{"time":"2021-02-18T08:29:48.507700114Z","level":"INFO","prefix":"echo","file":"proc.go","line":"195","message":"Zipkin URL was not provided, tracing is not initialised"}

   ____    __
  / __/___/ /  ___
 / _// __/ _ \/ _ \
/___/\__/_//_/\___/ v3.2.6
High performance, minimalist Go web framework
https://echo.labstack.com
____________________________________O/_______
                                    O\
⇨ http server started on [::]:44279
```

The flag is the value of the file key in the JSON payload. Sure enough, the payload contains "file":"proc.go", so the redacted part is proc.go.

DevSlopCTF{proc.go}
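Because the interesting line is structured JSON, it can be parsed rather than searched as text. A small sketch, assuming one JSON object per log line as in the Echo output above; `json_field_values` is a hypothetical helper that skips the banner and any other non-JSON lines.

```python
import json
from typing import Iterator


def json_field_values(log_text: str, key: str) -> Iterator[str]:
    """Yield the value of key from every log line that parses as a JSON object."""
    for line in log_text.splitlines():
        line = line.strip()
        if not line.startswith("{"):
            continue  # skip the Echo banner and other plain-text lines
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue
        if isinstance(record, dict) and key in record:
            yield record[key]


if __name__ == "__main__":
    sample = (
        '{"time":"2021-02-18T08:29:48.507700114Z","level":"INFO","prefix":"echo",'
        '"file":"proc.go","line":"195","message":"Zipkin URL was not provided, tracing is not initialised"}\n'
        "High performance, minimalist Go web framework\n"
    )
    print(list(json_field_values(sample, "file")))  # ['proc.go']
```

Parsing the payload reads the file value regardless of where the key appears in the object, which a plain text search would not guarantee.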

Users API (399 Points)

We are getting the hang of it now. We simply need to repeat the steps above for the remaining services. Instead of creating a new deployment file with the touch command, I will copy the one we created for the Auth API and edit it to match the specifications.

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/users-api$ mkdir k8s
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/users-api$ cd k8s/
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/users-api/k8s$ cp ../../auth-api/k8s/deployment.yaml .
```

The requirements this time are:

- This configuration file should define a Deployment, and NOT a Pod (kind: Deployment)

- The name of the Deployment should be users-api (name: users-api)

- The container should run in your team’s namespace (team4)

- There should be one single users-api container running at a time (replicas: 1)

- Assign a label to the Pod: app: users-api (app: users-api)

- When defining a container:

  - The name of the container should be users-api (name: users-api)

  - The image should be downloaded from ECR (051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-users-api)

  - The container should listen on port 8083. Give a name to this container port (name: usersport)

- Finally, set the Pod’s restart policy to Always (restartPolicy: Always)

Our updated deployment file looks like this:

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/users-api$ gedit deployment.yaml
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/users-api$ cat deployment.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: users-api
spec:
  replicas: 1
  selector:
    matchLabels:
      app: users-api
  template:
    metadata:
      labels:
        app: users-api
    spec:
      containers:
        - name: users-api
          image: 051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-users-api
          ports:
            - name: usersport
              containerPort: 8083
      restartPolicy: Always
```

Let’s now deploy the users API.

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/users-api$ kubectl apply -f deployment.yaml --namespace=team4
deployment.apps/users-api created
```

Capture The Flag

Let’s verify that the pod has been created and is running.

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/users-api$ kubectl get pods -n team4
NAME                         READY   STATUS    RESTARTS   AGE
users-api-5558c96597-rjxmq   1/1     Running   0          47s
```

We need to dump the logs of this API.

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/users-api$ kubectl logs users-api-5558c96597-rjxmq -n team4
2021-02-18 08:53:03.724 INFO [bootstrap,,,] 1 --- [ main] s.c.a.AnnotationConfigApplicationContext : Refreshing org.springframework.context.annotation.AnnotationConfigApplicationContext@78e03bb5: startup date [Thu Feb 18 08:53:03 GMT 2021]; root of context hierarchy
//TRUNCATED
2021-02-18 08:53:18.702 INFO [users-api,,,] 1 --- [ main] com.elgris.usersapi.UsersApiApplication : Started UsersApiApplication in 15.703 seconds (JVM running for 16.601)
```

The logs are chatty, but the entry we need is the last one. The flag is the string that has been replaced with [REDACTED] in the statement Started [REDACTED] in XXXX seconds (JVM running for XXXX), which here is UsersApiApplication.

DevSlopCTF{UsersApiApplication}
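Rather than scrolling through the Spring Boot output, you can search for the startup message directly. A small sketch, assuming the log line has the "Started <name> in <n> seconds" form shown above; `started_application` is a hypothetical helper that also returns the reported startup time.

```python
import re
from typing import Optional, Tuple

# Matches e.g. "Started UsersApiApplication in 15.703 seconds (JVM running for 16.601)".
_STARTED_RE = re.compile(r"Started (\S+) in ([\d.]+) seconds")


def started_application(log_text: str) -> Optional[Tuple[str, float]]:
    """Return (application name, startup seconds) from the last matching line, if any."""
    matches = _STARTED_RE.findall(log_text)
    if not matches:
        return None
    name, seconds = matches[-1]
    return name, float(seconds)


if __name__ == "__main__":
    line = ("2021-02-18 08:53:18.702 INFO [users-api,,,] 1 --- [ main] "
            "com.elgris.usersapi.UsersApiApplication : "
            "Started UsersApiApplication in 15.703 seconds (JVM running for 16.601)")
    print(started_application(line))  # ('UsersApiApplication', 15.703)
```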

TODOs API (400 Points)

Let’s repeat the steps above for the TODOs API. Again, instead of creating a new deployment file with the touch command, I will copy the one we created for the Auth API and edit it to match the specifications.

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/todos-api$ mkdir k8s
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/todos-api$ cd k8s/
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/todos-api/k8s$ ls
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/todos-api/k8s$ cp ../../auth-api/k8s/deployment.yaml .
```

The requirements this time are:

- This configuration file should define a Deployment, and NOT a Pod (kind: Deployment)

- The name of the Deployment should be todos-api (name: todos-api)

- The container should run in your team’s namespace (team4)

- There should be one single todos-api container running at a time (replicas: 1)

- Assign two labels to the Pod: app: todos-api and redis-access: "true"

- When defining a container:

  - The name of the container should be todos-api (name: todos-api)

  - The image should be downloaded from ECR (051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-todos-api)

  - The container should listen on port 8082. Give a name to this container port (name: todosport)

- Finally, set the Pod’s restart policy to Always (restartPolicy: Always)

Here is the resulting file:

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/todos-api/k8s$ gedit deployment.yaml
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/todos-api/k8s$ cat deployment.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: todos-api
spec:
  replicas: 1
  selector:
    matchLabels:
      app: todos-api
      redis-access: "true"
  template:
    metadata:
      labels:
        app: todos-api
        redis-access: "true"
    spec:
      containers:
        - name: todos-api
          image: 051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-todos-api
          ports:
            - name: todosport
              containerPort: 8082
      restartPolicy: Always
```
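This file and the log-message-processor one that follows differ only in name, image and port, so the copy-and-edit step could also be done by generating the manifest. Below is a minimal sketch of that idea. render_deployment is a hypothetical helper, and it assumes every service gets the redis-access: "true" label, which holds for todos-api and log-message-processor but not necessarily for the other services.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of the CTF): print a Deployment manifest
# shaped like the todos-api and log-message-processor files in this walkthrough.
# Arguments: NAME IMAGE [PORT PORT_NAME]
# When PORT is omitted, the ports: section is left out entirely.
render_deployment() {
  local name="$1" image="$2" port="${3:-}" port_name="${4:-}"
  # The selector labels must match the Pod template labels,
  # so both sections are filled from the same values.
  cat <<EOF
apiVersion: apps/v1
kind: Deployment
metadata:
  name: ${name}
spec:
  replicas: 1
  selector:
    matchLabels:
      app: ${name}
      redis-access: "true"
  template:
    metadata:
      labels:
        app: ${name}
        redis-access: "true"
    spec:
      containers:
        - name: ${name}
          image: ${image}
EOF
  if [ -n "$port" ]; then
    cat <<EOF
          ports:
            - name: ${port_name}
              containerPort: ${port}
EOF
  fi
  echo "      restartPolicy: Always"
}
```

For example, render_deployment todos-api 051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-todos-api 8082 todosport > deployment.yaml would produce an equivalent of the file above. Leaving off the last two arguments drops the ports: section, which matches the log-message-processor requirements.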

Let us deploy the Todo API.

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/todos-api/k8s$ kubectl apply -f deployment.yaml --namespace=team4
deployment.apps/todos-api created
```

Capture The Flag

Let us verify that the pod is up and running, then dump its logs.

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/todos-api/k8s$ kubectl get pods -n team4
NAME                         READY   STATUS    RESTARTS   AGE
todos-api-5787675bc6-qw4cw   1/1     Running   0          4m38s
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/todos-api/k8s$ kubectl logs todos-api-5787675bc6-qw4cw -n team4
> todos-api@1.0.0 start /usr/src/app
> nodemon server.js
[nodemon] 1.19.4
[nodemon] to restart at any time, enter `rs`
[nodemon] watching dir(s): *.*
[nodemon] watching extensions: js,mjs,json
[nodemon] starting `node server.js`
todo list RESTful API server started on: 8082
```

The flag is the string that has been replaced with [REDACTED] in the line [REDACTED] starting node server.js. In the logs above, that string is nodemon.

DevSlopCTF{nodemon}

Log Message Processor (400 Points)

The last service to deploy is the Log Message Processor. The same steps apply here: let’s create the folder and copy over the deployment file so that we can edit it.

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/todos-api/k8s$ cd ../../log-message-processor/
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/log-message-processor$ mkdir k8s
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/log-message-processor$ cd k8s/
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/log-message-processor/k8s$ cp ../../auth-api/k8s/deployment.yaml .
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/log-message-processor/k8s$ gedit deployment.yaml
```

The requirements this time are:

- This configuration file should define a Deployment, and NOT a Pod (kind: Deployment)

- The name of the Deployment should be log-message-processor (name: log-message-processor)

- The container should run in your team’s namespace (team4)

- There should be one single log-message-processor container running at a time (replicas: 1)

- Assign two labels to the Pod: app: log-message-processor and redis-access: "true"

- When defining a container:

  - The name of the container should be log-message-processor (name: log-message-processor)

  - The image should be downloaded from ECR (051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-log-message-processor)

  - The container should NOT listen on any port (so we remove the ports: section entirely)

- Finally, set the Pod’s restart policy to Always (restartPolicy: Always)

The resulting deployment file is below

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/log-message-processor/k8s$ gedit deployment.yaml
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/log-message-processor/k8s$ cat deployment.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: log-message-processor
spec:
  replicas: 1
  selector:
    matchLabels:
      app: log-message-processor
      redis-access: "true"
  template:
    metadata:
      labels:
        app: log-message-processor
        redis-access: "true"
    spec:
      containers:
        - name: log-message-processor
          image: 051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-log-message-processor
      restartPolicy: Always
```

Finally, let’s deploy it.

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/log-message-processor/k8s$ kubectl apply -f deployment.yaml --namespace=team4
deployment.apps/log-message-processor created
```

Capture The Flag

Let’s verify the status of the Pod.

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/log-message-processor/k8s$ kubectl get pods -n team4
NAME                                     READY   STATUS             RESTARTS   AGE
log-message-processor-78b6668c64-r67cz   0/1     CrashLoopBackOff   1          12s
```

The pod is not running: it is stuck in the CrashLoopBackOff status. According to the instructions this is expected, and it will be fixed later.

Let’s analyze the logs for this pod to see the error.

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/log-message-processor/k8s$ kubectl logs log-message-processor-78b6668c64-r67cz -n team4
Traceback (most recent call last):
  File "main.py", line 16, in <module>
    redis_host = os.environ['REDIS_HOST']
  File "/usr/local/lib/python3.6/os.py", line 669, in __getitem__
    raise KeyError(key) from None
KeyError: 'REDIS_HOST'
```

The flag is the string that has been replaced with {REDACTED} in the line KeyError: '{REDACTED}'. In this case, the value is REDIS_HOST.

DevSlopCTF{REDIS_HOST}
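The crash comes from os.environ['REDIS_HOST'], which raises KeyError when the variable is not set, so the process exits and Kubernetes keeps restarting the container. The same fail-fast pattern can be sketched in shell with the ${VAR?message} expansion. require_redis_host is a hypothetical illustration, not code from the CTF. (Once a container has restarted, kubectl logs --previous shows the output of the previous, crashed instance.)

```shell
#!/usr/bin/env bash
# Hypothetical illustration, not code from the CTF.
# ${REDIS_HOST?msg} fails with "msg" when REDIS_HOST is unset, mirroring
# Python's os.environ['REDIS_HOST'] raising KeyError. An empty value is
# accepted by both; ${REDIS_HOST:?msg} would reject that as well.
# In a non-interactive shell the failed expansion terminates the script,
# much like the uncaught KeyError terminates main.py.
require_redis_host() {
  : "${REDIS_HOST?REDIS_HOST is not set}"
  printf 'connecting to %s\n' "$REDIS_HOST"
}
```

Once REDIS_HOST is provided to the container, the lookup succeeds and startup continues.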

Configuring Environment Variables with ConfigMaps and Secrets

In this section, we will be dealing with ConfigMaps and Secrets which enable us to provide a few environment variables to each microservice so they can function correctly.

Introduction (5 Points)

We see a sample showing how to add variables to a container using the env field. We are advised to use ConfigMaps and Secrets to do so. The difference is that ConfigMaps store non-confidential data, while Secrets are meant for sensitive information.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: envar-demo
  labels:
    purpose: demonstrate-envars
spec:
  containers:
    - name: envar-demo-container
      image: gcr.io/google-samples/node-hello:1.0
      env:
        - name: DEMO_GREETING
          value: "Hello from the environment"
        - name: DEMO_FAREWELL
          value: "Such a sweet sorrow"
```
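One practical difference worth keeping in mind: the values under a Secret's data: field are only base64-encoded, not encrypted. The sketch below shows the round trip with the standard base64 tool; the helper names and the sample value redis are hypothetical. kubectl create secret generic with --from-literal, or the Secret's stringData: field, can do the encoding for you.

```shell
#!/usr/bin/env bash
# Secret values under data: are base64-encoded, NOT encrypted:
# anyone who can read the Secret can decode them.
# Helper names are hypothetical.
# printf '%s' (rather than echo) avoids encoding a trailing newline.
encode_secret_value() { printf '%s' "$1" | base64; }
decode_secret_value() { printf '%s' "$1" | base64 --decode; }
```

For example, encode_secret_value redis yields cmVkaXM=, and decode_secret_value cmVkaXM= gives back redis.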

Capture The Flag

Let’s confirm that we do know what ConfigMaps and Secrets are.

DevSlopCTF{ok}

Front-end (250 Points)

The Front-end needs 3 environment variables to function correctly. None of them is confidential, so a ConfigMap is the right place for them:

- AUTH_API_ADDRESS: The address of the Auth API

- PORT: The port on which the Front-end should listen for traffic

- TODOS_API_ADDRESS: The address of the TODOs API

Let’s create a file called configmap.yaml that meets the requirements below. The official Kubernetes documentation has a sample ConfigMap we can start from.

- The name of the ConfigMap should be frontend (name: frontend)

- The ConfigMap should define 3 key-value pairs (data:):

  - AUTH_API_ADDRESS: http://auth-api:8081

  - PORT: 8080

  - TODOS_API_ADDRESS: http://todos-api:8082

Here is the ConfigMap I used:

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/frontend/k8s$ gedit configmap.yaml
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/frontend/k8s$ cat configmap.yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: frontend
data:
  AUTH_API_ADDRESS: http://auth-api:8081
  PORT: "8080"
  TODOS_API_ADDRESS: http://todos-api:8082
```
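Note that PORT is written as "8080" with quotes: ConfigMap data values must be strings, and an unquoted 8080 would be parsed as an integer and rejected. A minimal sketch of a generator that always quotes values is below. render_configmap is hypothetical and assumes the values contain no double quotes or backslashes. kubectl create configmap frontend --from-literal=PORT=8080 (plus one --from-literal per key) with --dry-run=client -o yaml is a built-in alternative.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a ConfigMap whose data values are always
# double-quoted, so numbers such as 8080 stay strings.
# Usage: render_configmap NAME KEY=VALUE [KEY=VALUE ...]
# Assumes values contain no double quotes or backslashes.
render_configmap() {
  local name="$1"; shift
  printf 'apiVersion: v1\nkind: ConfigMap\nmetadata:\n  name: %s\ndata:\n' "$name"
  local pair
  for pair in "$@"; do
    # Split on the first '=' only, so a value may itself contain '='.
    printf '  %s: "%s"\n' "${pair%%=*}" "${pair#*=}"
  done
}
```

For example, render_configmap frontend AUTH_API_ADDRESS=http://auth-api:8081 PORT=8080 TODOS_API_ADDRESS=http://todos-api:8082 > configmap.yaml produces the same three keys as the file above, with every value quoted.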

Let’s apply the ConfigMap and verify that the frontend ConfigMap has been created successfully and defines 3 key-value pairs.

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/frontend/k8s$ kubectl apply -f configmap.yaml --namespace=team4
configmap/frontend created
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/frontend/k8s$ kubectl get configmap frontend -n team4
NAME       DATA   AGE
frontend   3      60s
```

We now need to make deployment.yaml reference the ConfigMap, so that its values are injected as environment variables when the pods are created. The example in the official Kubernetes documentation shows how to do this with configMapKeyRef, and I used it to make the changes in the deployment file.

```
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/frontend/k8s$ gedit deployment.yaml
userxx@lsv-u01:~/Downloads/Kubernetes-ctf/frontend/k8s$ cat deployment.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: frontend
spec:
  replicas: 1
  selector:
    matchLabels:
      app: frontend
  template:
    metadata:
      labels:
        app: frontend
    spec:
      containers:
        - name: frontend
          image: 051096112438.dkr.ecr.us-east-1.amazonaws.com/team4-frontend:frontend
          ports:
            - name: webport
              containerPort: 8080
          env: # this is how we specify the environment variables
            - name: AUTH_API_ADDRESS
              valueFrom:
                configMapKeyRef:
                  name: frontend # the name of our ConfigMap is frontend
                  key: AUTH_API_ADDRESS
            - name: PORT
              valueFrom:
                configMapKeyRef:
                  name: frontend # the name of our ConfigMap is frontend
                  key: PORT
            - name: TODOS_API_ADDRESS
              valueFrom:
                configMapKeyRef:
                  name: frontend # the name of our ConfigMap is frontend
                  key: TODOS_API_ADDRESS
      restartPolicy: Always
```
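The three env entries in this file follow a single pattern, so they can be generated for any list of keys. The sketch below is a hypothetical generator; its indentation assumes two-space YAML with the container fields at 10 spaces, as in a properly indented version of this deployment file. Kubernetes also offers envFrom with a configMapRef, which imports every key of a ConfigMap as an environment variable without listing them one by one.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print env: entries that expose each ConfigMap key as an
# environment variable of the same name via configMapKeyRef.
# Usage: render_env_from_configmap CONFIGMAP KEY [KEY ...]
render_env_from_configmap() {
  local cm="$1"; shift
  local indent key
  # 10 spaces: the container-level indentation assumed for deployment.yaml.
  indent="$(printf '%10s' '')"
  printf '%senv:\n' "$indent"
  for key in "$@"; do
    printf '%s  - name: %s\n' "$indent" "$key"
    printf '%s    valueFrom:\n' "$indent"
    printf '%s      configMapKeyRef:\n' "$indent"
    printf '%s        name: %s\n' "$indent" "$cm"
    printf '%s        key: %s\n' "$indent" "$key"
  done
}
```

Running render_env_from_configmap frontend AUTH_API_ADDRESS PORT TODOS_API_ADDRESS prints the same three entries as above, minus the explanatory comments.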

Let’s update the deployment and double-check that all is well.

userxx@lsv-u01:~/Downloads/Kubernetes-ctf/frontend/k8s$ kubectl apply -f deployment.yaml --namespace=team4

deployment.apps/frontend configured

userxx@lsv-u01:~/Downloads/Kubernetes-ctf/frontend/k8s$ kubectl get pods -n team4